Linear · works for separable data, fails for curved data

Why raw pixels break a linear classifier

Pop quiz · which model would you pick?

A friend asks · "I have

(a) Logistic regression on raw pixel intensities.

(b) Hand-engineer SIFT/HOG features, then SVM.

(c) Train a CNN end-to-end.

Stop and decide before the next slide. Why did you pick what you picked?

There is no trick · we want you to feel where each option helps and hurts. The next slides explain why the right answer changed in 2012 — and why this whole course exists.

Classical ML vs deep learning

Deep learning, in one sentence

Deep learning = representation learning with differentiable, composable modules, trained end-to-end by gradient descent.

Every word matters. We will unpack all of them this semester.

What representation learning buys you

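Classical ML: raw input → hand-engineered features (e.g., SIFT/HOG) → trained classifier (e.g., SVM). Only the last stage learns.

Deep learning: raw input → learned layers of features → classifier, all trained jointly, end-to-end.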

The key change is not "more parameters". It is that each layer learns a transformation: pixels become edges, edges become parts, parts become object evidence. Good representations keep task-relevant variation and suppress nuisance variation.

Three eras of deep learning

ImageNet · the turning point (2012)

| Year | Winner | Top-5 error | Method |
|---|---|---|---|
| 2010 | NEC-UIUC | 28.2% | SIFT + Fisher |
| 2011 | XRCE | 25.8% | Hand-crafted |
| 2012 | AlexNet | 16.4% | 8-layer CNN |
| 2015 | ResNet | 3.6% | 152-layer CNN |

Human top-5 error: ~5.1%. AlexNet cut error by ~10 points in one year.

Why now? Three ingredients compounded

Why now · concrete numbers

| Ingredient | 1990 | 2024 | Growth |
|---|---|---|---|
| Compute (FLOPs per dollar, best case) | ~10⁸ FLOPs/$ | ~10¹⁵ FLOPs/$ | ~10⁷× |
| Datasets (frontier) | 60k MNIST digits | 5T LLM tokens | ~10⁸× (different units) |
| SOTA model params | LeNet (60k) | Llama-3 405B | ~7×10⁶× |

Three exponential ramps compounding for 30+ years. Algorithms that were "impossible" in 1990 became cheap in 2012 and routine by 2020. Deep learning didn't get smarter — the world got faster.

The 2012 ImageNet jump was the moment all three crossed the line at once.

Learning outcomes · for this lecture

By the end of this lecture you will be able to:

- Articulate why DL works now but did not earlier: compute, data, algorithms.

- Describe representation learning as learning transformations, not just classifiers.

- Explain why stacked linear layers collapse and why nonlinear layers do not.

- Compute a small MLP's forward pass, parameter count, and tensor shapes.

- Derive why softmax + cross-entropy gives the gradient $\mathbf{p} - \mathbf{y}$ (prediction minus target) with respect to the logits.

- State the three axes that make deep networks useful: expressivity, trainability, generalization.

Course roadmap

24-lecture arc

Foundations → optimization → regularization → CNNs → detection → sequences → attention → Transformers → LLMs → vision-language → VAEs → GANs → diffusion → efficient inference.

- Framework: PyTorch, exclusively.

- Primary textbook: Prince, Understanding Deep Learning (2023) — free PDF.

- Style: math + code + intuition + examples.

- Assessment: 4 quizzes · 4 assignments · attendance · bonus.

PART 2

MLP recap

The building block you already know — tightened up

Our running example

Tying back to Lecture 0 · what changed

In L0 we showed every loss is the NLL of an assumed conditional distribution ·

| Task | Conditional $p(y \mid x)$ | Loss |
|---|---|---|
| Regression | Gaussian | MSE |
| Binary classification | Bernoulli | BCE |
| Multiclass classification | Categorical | CE |

Logistic regression and softmax classification are 1-layer neural nets. You've already met the entire framework. Today we add hidden layers so the conditional distribution can be a more complex function of the input $x$.

The probabilistic story is unchanged · pick a distribution, write the NLL, optimize. Only the function that maps $x$ to the distribution's parameters changes: it gains hidden layers.

From linear models to neurons

You already know linear regression / classification from ES 654: $\hat{y} = \mathbf{w}^\top \mathbf{x} + b$.

A neuron is just this · plus a non-linear "squashing" function: $a = \sigma(\mathbf{w}^\top \mathbf{x} + b)$.

That's the only new ingredient. Everything in this course builds on top · stack many of these neurons, learn the weights, you get a deep network.

Worked example · one neuron forward pass

Input ·

Pre-activation ·

Activation (sigmoid) ·

This 1-neuron, 2-input setup is exactly what we just had in linear regression · plus the sigmoid making it 0–1 instead of any real number. Stack 500 of these and you have an MLP layer.
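A minimal plain-Python sketch of the same forward pass. The input, weights, and bias are hypothetical values chosen for illustration.

```python
import math

def sigmoid(z: float) -> float:
    """Logistic sigmoid: squashes any real z into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(x, w, b):
    """One neuron: pre-activation z = w·x + b, then activation a = sigmoid(z)."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return z, sigmoid(z)

# Hypothetical 2-input example (illustrative values only).
x = [1.0, 2.0]
w = [0.5, -0.25]
b = 0.1
z, a = neuron(x, w, b)
print(f"z = {z:.3f}, a = {a:.3f}")
```

Dropping the `sigmoid` call gives back the plain linear model; the squashing step is the only difference.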

The single neuron — anatomy

In vector form

Q. Why the non-linearity? What breaks without it?

Why we need a non-linearity · the magnifying-glass analogy

Stack two magnifying glasses · you get a bigger image. Still just a bigger linear version of the original.

Stack two linear layers · same story. The composition of linear maps is just another linear map. No new patterns become learnable.

A non-linearity is like adding a prism · it bends the input in a way no linear stack can replicate. Each layer can learn a new kind of feature.

This is why every deep network has activation functions between linear layers. Without them, depth is wasted.

Let's prove it · stacking linear layers collapses

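A tiny 2-layer network with no nonlinearity:

Layer 1 · $\mathbf{h} = W_1\mathbf{x} + \mathbf{b}_1$

Layer 2 · $\mathbf{y} = W_2\mathbf{h} + \mathbf{b}_2$

Substitute h into the second equation: $\mathbf{y} = W_2(W_1\mathbf{x} + \mathbf{b}_1) + \mathbf{b}_2$

Distribute: $\mathbf{y} = W_2W_1\mathbf{x} + W_2\mathbf{b}_1 + \mathbf{b}_2$

Define · $W' = W_2W_1$ and $\mathbf{b}' = W_2\mathbf{b}_1 + \mathbf{b}_2$, so $\mathbf{y} = W'\mathbf{x} + \mathbf{b}'$.

The 2-layer network is exactly one linear layer. The depth was useless.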

Worked numeric example · the collapse

Forward through the 2 layers:

Equivalent single layer:

- Check ·

Without σ · depth gives nothing
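A NumPy sketch of the collapse, using hypothetical 2×2 weights. Two bias-carrying linear layers with no σ give exactly the same output as the single layer $W' = W_2W_1$, $\mathbf{b}' = W_2\mathbf{b}_1 + \mathbf{b}_2$.

```python
import numpy as np

# Hypothetical weights and biases for a 2 -> 2 -> 2 linear network (no activation).
W1 = np.array([[1.0, 2.0], [0.0, 1.0]])
b1 = np.array([1.0, -1.0])
W2 = np.array([[2.0, 0.0], [1.0, 1.0]])
b2 = np.array([0.5, 0.0])

x = np.array([3.0, -2.0])

# Forward through the two layers.
h = W1 @ x + b1
y_two_layer = W2 @ h + b2

# Equivalent single layer: W' = W2 W1, b' = W2 b1 + b2.
W_prime = W2 @ W1
b_prime = W2 @ b1 + b2
y_one_layer = W_prime @ x + b_prime

print(y_two_layer, y_one_layer)
```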

XOR · the canonical "linear can't, MLP can" example

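XOR · 2D binary inputs $x_1, x_2$, output $y = x_1 \oplus x_2$ (1 when exactly one input is 1):

| $x_1$ | $x_2$ | $y$ |
|---|---|---|
| 0 | 0 | 0 |
| 0 | 1 | 1 |
| 1 | 0 | 1 |
| 1 | 1 | 0 |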

XOR is the historical "minor scandal" that broke perceptrons in the 1969 Minsky-Papert critique — and motivated the multi-layer / hidden-unit revolution of the 1980s.

Try mentally · can you find a single line that puts both $y = 1$ points on one side and both $y = 0$ points on the other?

XOR · linear fails, two-layer MLP succeeds

Left · Three attempts at a linear separator. Whichever line you draw, two same-class points end up on opposite sides.

Right · A 2-layer MLP with 2 hidden ReLU units carves out a piecewise-linear decision region · all four points correctly classified.

XOR · build a 2-layer MLP by hand

A 2-input, 2-hidden, 1-output MLP with ReLU ·

Choose by hand ·

Outputs
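One possible hand-picked solution, sketched in plain Python. These weights are an assumption, a standard textbook choice, and not necessarily the ones chosen on the slide: $h_1 = \mathrm{ReLU}(x_1 + x_2)$, $h_2 = \mathrm{ReLU}(x_1 + x_2 - 1)$, $y = h_1 - 2h_2$.

```python
def relu(z: float) -> float:
    return max(0.0, z)

def xor_mlp(x1: float, x2: float) -> float:
    """2-input, 2-hidden (ReLU), 1-output MLP with hand-chosen weights."""
    h1 = relu(1.0 * x1 + 1.0 * x2 + 0.0)   # counts how many inputs are on
    h2 = relu(1.0 * x1 + 1.0 * x2 - 1.0)   # fires only when both inputs are on
    return 1.0 * h1 - 2.0 * h2 + 0.0        # linear output layer

for x1 in (0, 1):
    for x2 in (0, 1):
        print(x1, x2, xor_mlp(x1, x2))
```

The hidden layer maps (0, 1) and (1, 0) to the same point, and sends (1, 1) somewhere the output layer can push back down to 0. That is exactly what no single line in input space can do.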

Feature-space transformation · the deeper view

A hidden layer reshapes the data so the next (linear) layer's job becomes easy.

Left · two interlocking spirals · no straight line separates them.

Right · the same data after a learned hidden layer · spirals are unrolled into bands that a linear classifier separates trivially.

This is what people mean by "deep learning is representation learning." The hidden layers learn a coordinate system in which the problem becomes linear. The final softmax is just classical logistic regression — applied to features the network designed for itself.

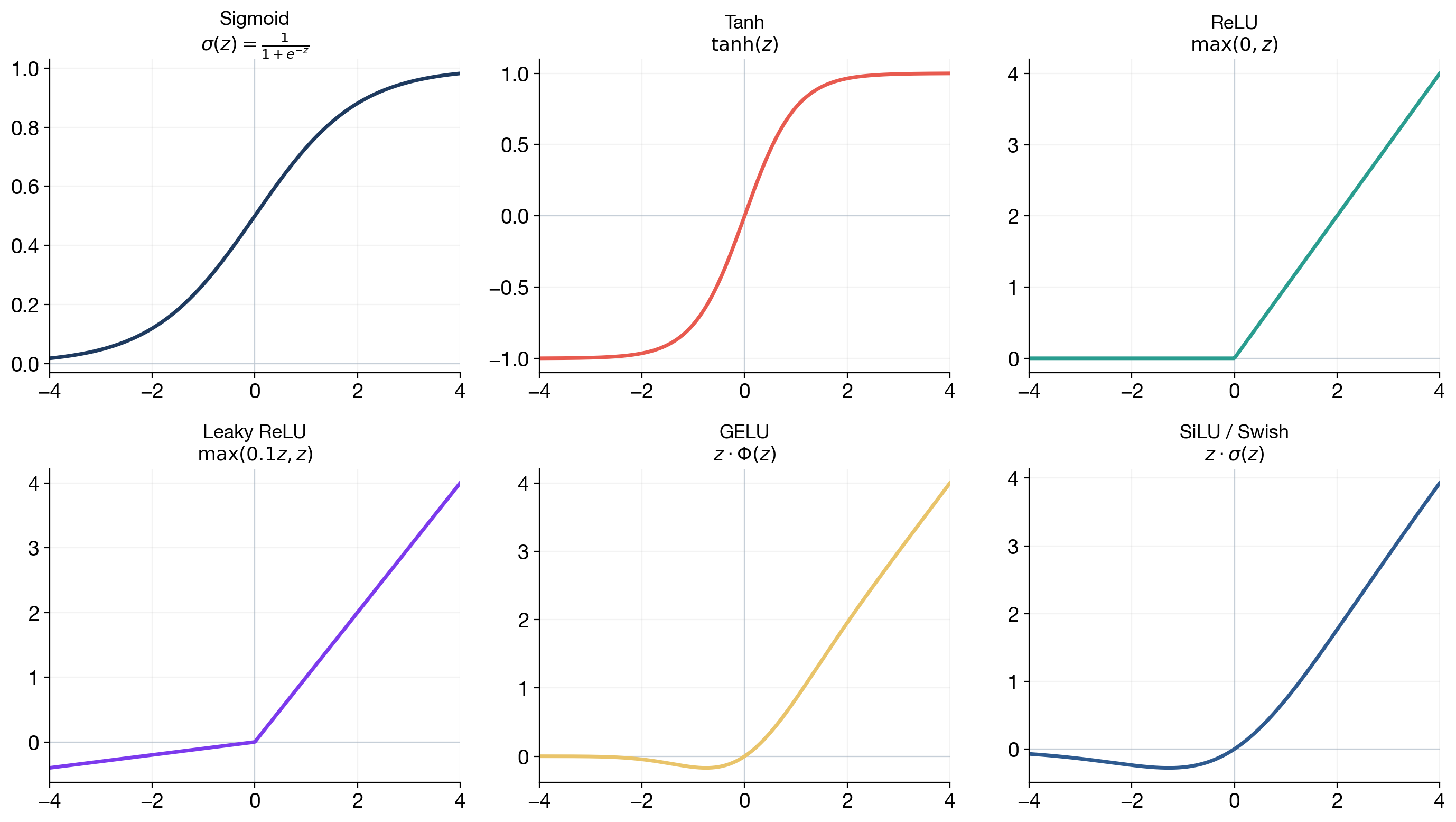

Activation functions at a glance

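| Name | Formula | Where you see it |
|---|---|---|
| Sigmoid | $\sigma(z) = 1/(1+e^{-z})$ | gates |
| Tanh | $\tanh(z) = (e^{z}-e^{-z})/(e^{z}+e^{-z})$ | RNNs |
| ReLU | $\max(0, z)$ | most CNNs |
| GELU / SiLU | $z\,\Phi(z)$ / $z\,\sigma(z)$ | Transformers, LLMs |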
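The formulas in the table as plain-Python functions, a minimal sketch. GELU here uses the exact form $z\,\Phi(z)$ with $\Phi$ the standard normal CDF (written via `math.erf`). Frameworks also offer a tanh-based approximation, which this sketch does not use.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def tanh(z):
    return math.tanh(z)

def relu(z):
    return max(0.0, z)

def gelu(z):
    # Exact GELU: z * Phi(z), Phi = standard normal CDF.
    return z * 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def silu(z):
    # SiLU (also called swish): z * sigmoid(z).
    return z * sigmoid(z)

for z in (-2.0, 0.0, 2.0):
    print(z, [round(f(z), 4) for f in (sigmoid, tanh, relu, gelu, silu)])
```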

Activation functions · what can go wrong

| Activation | Failure mode | Practical consequence |
|---|---|---|
| Sigmoid | saturates; derivative $\le 0.25$ | vanishing gradients |
| Tanh | saturates for large $\lvert z \rvert$ | vanishing gradients in deep or recurrent stacks |
| ReLU | zero gradient for $z < 0$ | dead units if updates are harsh |
| GELU / SiLU | smoother but costlier | common in Transformers, less common in tiny CNNs |

An activation is not decoration. It controls both the representation and the backward gradient flow. Modern defaults are ReLU-family for CNNs and GELU / SwiGLU-family for Transformers.

Stacking neurons → MLP

Parameter count — do this in your head

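For MNIST with hidden sizes 256, 256:

$(784 \cdot 256 + 256) + (256 \cdot 256 + 256) + (256 \cdot 10 + 10) = 200{,}960 + 65{,}792 + 2{,}570 = 269{,}322$

A tiny MNIST model has ~270k parameters. GPT-3 has 175 billion, roughly 650,000× more.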
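A small helper that reproduces the count. Each `nn.Linear(d_in, d_out)` holds a d_out × d_in weight matrix plus d_out biases. The function name `mlp_param_count` is hypothetical. For a real PyTorch model the usual check is `sum(p.numel() for p in model.parameters())`.

```python
def mlp_param_count(sizes):
    """Parameters of a fully connected net with layer widths sizes[0] -> ... -> sizes[-1].

    Each layer contributes fan_in * fan_out weights plus fan_out biases.
    """
    return sum(d_in * d_out + d_out for d_in, d_out in zip(sizes[:-1], sizes[1:]))

n = mlp_param_count([784, 256, 256, 10])
print(n)              # 269322
print(175e9 / n)      # how many times larger GPT-3 is
```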

Batched matrix form · the shapes that matter

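For a mini-batch: $H = \mathrm{ReLU}(XW_1^\top + \mathbf{b}_1)$, with one example per row of $X$ and $W_1$ stored as out × in (like `nn.Linear.weight`).

MNIST batch of 64:

| Tensor | Shape |
|---|---|
| flattened input $X$ | 64 × 784 |
| first weight $W_1$ | 256 × 784 |
| hidden activations $H$ | 64 × 256 |
| final logits | 64 × 10 |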

Most PyTorch bugs in early DL are shape bugs. Check the batch dimension first.
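A NumPy shape walk-through for a batch of 64, using random stand-in values and one hidden layer for brevity. It follows the `nn.Linear` convention: a weight is stored as (out_features, in_features) and the layer computes $XW^\top + \mathbf{b}$. The variable names are ours.

```python
import numpy as np

rng = np.random.default_rng(0)
B, d_in, d_h, d_out = 64, 784, 256, 10

X = rng.standard_normal((B, d_in))             # flattened input: (64, 784)
W1 = rng.standard_normal((d_h, d_in)) * 0.01   # stored like nn.Linear.weight: (256, 784)
b1 = np.zeros(d_h)                             # (256,), broadcast over the batch
W2 = rng.standard_normal((d_out, d_h)) * 0.01  # (10, 256)
b2 = np.zeros(d_out)

H = np.maximum(0.0, X @ W1.T + b1)             # hidden activations: (64, 256)
Z = H @ W2.T + b2                              # final logits: (64, 10)

for name, t in [("X", X), ("W1", W1), ("H", H), ("Z", Z)]:
    print(name, t.shape)
```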

MLP in PyTorch

```python
import torch.nn as nn


class MLP(nn.Module):
    def __init__(self, d_in=784, d_h=256, d_out=10):
        super().__init__()
        self.net = nn.Sequential(
            nn.Linear(d_in, d_h), nn.ReLU(),
            nn.Linear(d_h, d_h), nn.ReLU(),
            nn.Linear(d_h, d_out),  # raw logits: no softmax here
        )

    def forward(self, x):
        return self.net(x)
```

Q. Why no activation after the last Linear?

The last layer is bare · the #1 beginner bug

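For classification:

- Output should be raw logits (no activation on the last layer).
- `nn.CrossEntropyLoss` internally applies `log_softmax`.
- Adding your own softmax → double softmax → loss frozen near $\ln 10$.

Symptom to memorize: training loss stuck at ~2.30 (= $\ln 10$, the cross-entropy of a uniform guess over MNIST's 10 classes).

Fix: remove the extra softmax.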
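A plain-Python sketch of why the double softmax hurts, assuming 10 classes. The loss applies log-softmax to whatever it receives. If the model already outputs probabilities in [0, 1], the second softmax can never move far from uniform. The loss starts at $\ln 10$, and even a perfectly confident model cannot push it below $\ln(e + 9) - 1 \approx 1.46$.

```python
import math

def log_softmax(z):
    """Numerically stable log-softmax."""
    m = max(z)
    lse = m + math.log(sum(math.exp(v - m) for v in z))
    return [v - lse for v in z]

def cross_entropy(logits, target):
    """Mirrors what nn.CrossEntropyLoss computes for one example: -log_softmax(logits)[target]."""
    return -log_softmax(logits)[target]

def softmax(z):
    return [math.exp(v) for v in log_softmax(z)]

K = 10
confident = [20.0] + [0.0] * (K - 1)   # very confident, correct logits for class 0
uniform = [0.0] * K                    # an untrained-looking model

print(cross_entropy(confident, 0))           # correct usage: close to 0
print(cross_entropy(softmax(confident), 0))  # double softmax: floor near 1.46
print(cross_entropy(uniform, 0))             # ln 10, about 2.30, either way
```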

PART 3

Losses and backprop

The math that makes learning possible

From scores to probabilities · the goal

The network outputs raw "scores" (logits) for each class · arbitrary real numbers, possibly negative, that need not sum to 1.

For classification, we need a valid probability distribution · all values in $[0, 1]$, summing to 1.

Two problems to solve:

- Make values positive · `exp(·)` does this for any real input.
- Make them sum to 1 · divide by the total.

Together · the softmax function, $\mathrm{softmax}(\mathbf{z})_k = e^{z_k} / \sum_j e^{z_j}$.

Softmax · worked numeric example

Logits ·

Step 1 · exponentiate ·

Step 2 · sum ·

Step 3 · normalize ·

Note · the relative ranking is preserved (the largest logit becomes the largest probability), but the values are now interpretable as probabilities. Softmax is the standard output mapping for classification. In PyTorch it is applied inside `nn.CrossEntropyLoss` rather than as a model layer.
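The three steps in plain Python, with hypothetical logits [2.0, 1.0, 0.1]. Subtracting the max before exponentiating is a standard stability trick. It does not change the result, because softmax is unchanged when the same constant is added to every logit.

```python
import math

logits = [2.0, 1.0, 0.1]                   # hypothetical example logits

m = max(logits)
exps = [math.exp(z - m) for z in logits]   # Step 1: exponentiate (shifted by the max for stability)
total = sum(exps)                          # Step 2: sum
probs = [e / total for e in exps]          # Step 3: normalize

print([round(p, 3) for p in probs])        # [0.659, 0.242, 0.099]
```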

Softmax · three acts

Why exponentiate?

- Logits can be negative; raw ratios misbehave.

- Softmax amplifies differences — biggest logit dominates smoothly.

- It falls out of maximum likelihood for categorical outputs (next).

Cross-entropy from MLE

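One example with true class $c$ and predicted probabilities $\mathbf{p} = \mathrm{softmax}(\mathbf{z})$: the likelihood of the observed label is $p_c$, so the NLL is $-\log p_c$.

With one-hot $\mathbf{y}$ ($y_c = 1$, all other entries 0): $-\log p_c = -\sum_k y_k \log p_k$.

This is cross-entropy. MLE hands it to us for free; we did not invent it.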

Why cross-entropy is the right score

Accuracy only asks whether the top class is correct. Cross-entropy asks whether the whole probability distribution is honest.

Suppose the true class is class 0:

| Prediction | CE loss |

|---|---|

But if the label is class 1:

| Prediction | CE loss |

|---|---|

Cross-entropy rewards calibrated confidence and punishes confident wrong answers. That is why it is a proper scoring rule for classification.
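A quick check of this scoring behaviour, using hypothetical three-class predictions. For one example, cross-entropy is just −log of the probability assigned to the true class.

```python
import math

def ce(probs, label):
    """Cross-entropy for one example: -log p[label]."""
    return -math.log(probs[label])

confident = [0.90, 0.05, 0.05]   # hypothetical predictions
hedged = [0.40, 0.30, 0.30]

for label in (0, 1):
    print(f"label={label}: confident={ce(confident, label):.3f}, hedged={ce(hedged, label):.3f}")
```

When the label is class 0, the confident prediction scores better. When the label is class 1, the same confident prediction is punished far more than the hedged one.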

Push-pull intuition · the gradient

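Logits $\mathbf{z}$, probabilities $\mathbf{p} = \mathrm{softmax}(\mathbf{z})$, one-hot target $\mathbf{y}$.

- If $k$ is the true class · $\partial L/\partial z_k = p_k - 1 < 0$, so gradient descent pushes $z_k$ up.
- If $k$ is a wrong class · $\partial L/\partial z_k = p_k > 0$, so gradient descent pulls $z_k$ down.

The next slide derives the formula. The result · $\partial L/\partial \mathbf{z} = \mathbf{p} - \mathbf{y}$.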

Deriving · softmax + CE gradient · step by step

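Setup · $L = -\sum_k y_k \log p_k$, with $p_k = e^{z_k} / \sum_j e^{z_j}$.

Want · $\partial L / \partial z_i$ for every logit $i$. By the chain rule, $\frac{\partial L}{\partial z_i} = \sum_k \frac{\partial L}{\partial p_k}\frac{\partial p_k}{\partial z_i}$.

Part 1 · $\frac{\partial L}{\partial p_k} = -\frac{y_k}{p_k}$.

Part 2 · case $k = i$: $\frac{\partial p_i}{\partial z_i} = p_i(1 - p_i)$.

Part 2 · case $k \neq i$: $\frac{\partial p_k}{\partial z_i} = -p_k p_i$.

Combine · $\frac{\partial L}{\partial z_i} = -\frac{y_i}{p_i}\,p_i(1 - p_i) + \sum_{k \neq i} \frac{y_k}{p_k}\,p_k p_i = -y_i + p_i \sum_k y_k$.

Since $\sum_k y_k = 1$ (one-hot target): $\frac{\partial L}{\partial z_i} = p_i - y_i$, i.e. $\nabla_{\mathbf{z}} L = \mathbf{p} - \mathbf{y}$.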
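A numerical sanity check of the derivation, as a plain-Python sketch. It compares the analytic gradient $\mathbf{p} - \mathbf{y}$ against central finite differences of the loss. The logits and label are arbitrary test values.

```python
import math

def softmax(z):
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]

def loss(z, label):
    """Softmax + cross-entropy for one example."""
    return -math.log(softmax(z)[label])

z = [0.5, -1.2, 2.0, 0.3]   # arbitrary test logits
label = 1
y = [1.0 if k == label else 0.0 for k in range(len(z))]

analytic = [p - t for p, t in zip(softmax(z), y)]   # p - y

eps = 1e-6
numeric = []
for i in range(len(z)):
    zp = z.copy()
    zp[i] += eps
    zm = z.copy()
    zm[i] -= eps
    numeric.append((loss(zp, label) - loss(zm, label)) / (2 * eps))

# Largest disagreement between the formula and finite differences; should be tiny.
print(max(abs(a - n) for a, n in zip(analytic, numeric)))
```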

Worked numeric · the gradient

Logits

SGD step ·

The gradient is bounded between -1 and 1 per logit · stable. No exploding gradients from the loss itself.
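A sketch of one SGD step applied directly to the logits. The logits, label, and learning rate are hypothetical. It shows the push-pull at work: the true-class logit rises, the others fall, and the true-class probability goes up.

```python
import math

def softmax(z):
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]

z = [2.0, 1.0, 0.1]   # hypothetical logits
label = 1             # hypothetical true class
lr = 1.0              # hypothetical learning rate

p = softmax(z)
grad = [pk - (1.0 if k == label else 0.0) for k, pk in enumerate(p)]   # p - y
z_new = [zk - lr * gk for zk, gk in zip(z, grad)]                      # SGD step

print("grad    ", [round(g, 3) for g in grad])
print("p[label]", round(p[label], 3), "->", round(softmax(z_new)[label], 3))
```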

The elegant softmax + CE gradient

$\nabla_{\mathbf{z}} L = \mathbf{p} - \mathbf{y}$ · prediction minus target. Same form as logistic regression (sigmoid + BCE gives $\hat{y} - y$); no accident.

What that gradient actually looks like

Backprop · the blame game

The forward pass computes the loss. Backprop figures out who to blame.

For each weight, ask · how much did this weight contribute to the final error?

Walk backwards from the loss through the network · at each layer, distribute "blame" proportionally to how much each weight affected the layer's output. Adjust weights to reduce that blame.

That's the entire idea. The math (chain rule) is mechanical · the concept is "trace responsibility backwards."

Backpropagation · the computational view

Every network is a DAG of differentiable ops. Forward computes values; backward computes gradients in reverse order.

Backprop · the blame distributor

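For a layer $\mathbf{z} = W\mathbf{x} + \mathbf{b}$, the upstream gradient $\boldsymbol{\delta} = \partial L / \partial \mathbf{z}$ arrives from the layers above.

We must figure out three things:

- How much was W's fault? · $\partial L / \partial W$
- How much was b's fault? · $\partial L / \partial \mathbf{b}$
- How much should we blame the previous layer's output $\mathbf{x}$? · $\partial L / \partial \mathbf{x}$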

The next slide does these one at a time, with full chain rule.

Deriving · the linear-layer backward pass

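For one element · $z_i = \sum_j W_{ij} x_j + b_i$, with upstream gradient $\delta_i = \partial L / \partial z_i$.

1. Bias · $\partial z_i / \partial b_i = 1$, so $\partial L / \partial b_i = \delta_i$, i.e. $\partial L / \partial \mathbf{b} = \boldsymbol{\delta}$.

2. Weight · $\partial z_i / \partial W_{ij} = x_j$, so $\partial L / \partial W_{ij} = \delta_i x_j$, i.e. $\partial L / \partial W = \boldsymbol{\delta}\mathbf{x}^\top$.

3. Input · $x_j$ feeds every $z_i$, so $\partial L / \partial x_j = \sum_i W_{ij}\delta_i$, i.e. $\partial L / \partial \mathbf{x} = W^\top \boldsymbol{\delta}$.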

These three lines are the entire backward pass for a Linear layer in PyTorch.

Worked numeric · linear-layer backward

Weight gradient ·

Bias gradient ·

Input gradient (passed to previous layer) ·

These three numbers are what loss.backward() computes for one Linear layer · in pure NumPy you could write it in 3 lines.
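A NumPy sketch of those three lines for one example. The layer is $\mathbf{z} = W\mathbf{x} + \mathbf{b}$ with W stored as out × in, as in `nn.Linear`. The weights, input, and upstream gradient δ are hypothetical values. The weight gradient is checked by finite differences against the stand-in loss $L = \boldsymbol{\delta} \cdot \mathbf{z}$, whose gradient with respect to $\mathbf{z}$ is exactly δ.

```python
import numpy as np

W = np.array([[0.2, -0.5, 1.0],
              [0.7, 0.1, -0.3]])   # (out=2, in=3)
b = np.array([0.1, -0.2])
x = np.array([1.0, 2.0, -1.0])
delta = np.array([0.5, -1.0])      # hypothetical upstream gradient dL/dz

# The three backward rules for z = W x + b.
dW = np.outer(delta, x)            # dL/dW = delta x^T -> (2, 3)
db = delta.copy()                  # dL/db = delta     -> (2,)
dx = W.T @ delta                   # dL/dx = W^T delta -> (3,), passed to the previous layer

# Finite-difference check on W with the stand-in loss L = delta . (W x + b).
def L(W_):
    return delta @ (W_ @ x + b)

eps = 1e-6
dW_num = np.zeros_like(W)
for i in range(W.shape[0]):
    for j in range(W.shape[1]):
        Wp, Wm = W.copy(), W.copy()
        Wp[i, j] += eps
        Wm[i, j] -= eps
        dW_num[i, j] = (L(Wp) - L(Wm)) / (2 * eps)

print(dW, db, dx, sep="\n")
print(np.allclose(dW, dW_num))
```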

The local-gradient rule · three lines

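For $\mathbf{z} = W\mathbf{x} + \mathbf{b}$ and $\boldsymbol{\delta} = \partial L / \partial \mathbf{z}$:

$\partial L / \partial W = \boldsymbol{\delta}\mathbf{x}^\top$

$\partial L / \partial \mathbf{b} = \boldsymbol{\delta}$

$\partial L / \partial \mathbf{x} = W^\top \boldsymbol{\delta}$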

These three lines are the entire backward pass of a linear layer. Everything else is repeating this rule through activations and stacking.

End-to-end worked numeric · 2-layer MLP, one example

Tiny 2-1-1 net with sigmoid hidden, sigmoid output. Input

Forward ·

- BCE loss

Backward (using the BCE+sigmoid identity $\partial L / \partial z = \hat{y} - y$ for the output pre-activation $z$) ·

Update ·

Forward at step 1 ·

This is the entire training loop on one example, by hand. SGD does this for every (sample, weight) pair, every step, every epoch.
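The whole loop in plain Python for a 2-1-1 network (2 inputs, 1 sigmoid hidden unit, 1 sigmoid output). The input, label, initial weights, and learning rate are hypothetical. The backward pass uses the BCE + sigmoid identity at the output and the sigmoid derivative $\sigma(z)(1 - \sigma(z))$ at the hidden unit.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(x, w1, b1, w2, b2):
    z1 = w1[0] * x[0] + w1[1] * x[1] + b1   # hidden pre-activation
    h = sigmoid(z1)                          # hidden activation
    z2 = w2 * h + b2                         # output pre-activation
    y_hat = sigmoid(z2)                      # predicted P(y = 1)
    return h, y_hat

def bce(y_hat, y):
    return -(y * math.log(y_hat) + (1 - y) * math.log(1 - y_hat))

# Hypothetical example and initial parameters.
x, y = [1.0, 0.5], 1.0
w1, b1, w2, b2 = [0.3, -0.2], 0.0, 0.4, 0.0
lr = 0.5

# Forward at step 0.
h, y_hat = forward(x, w1, b1, w2, b2)
loss0 = bce(y_hat, y)

# Backward.
dz2 = y_hat - y              # BCE + sigmoid identity
dw2 = dz2 * h
db2 = dz2
dh = dz2 * w2                # blame passed back to the hidden unit
dz1 = dh * h * (1 - h)       # through the sigmoid derivative
dw1 = [dz1 * x[0], dz1 * x[1]]
db1 = dz1

# SGD update.
w1 = [w1[0] - lr * dw1[0], w1[1] - lr * dw1[1]]
b1 -= lr * db1
w2 -= lr * dw2
b2 -= lr * db2

# Forward at step 1.
_, y_hat1 = forward(x, w1, b1, w2, b2)
loss1 = bce(y_hat1, y)
print(f"loss: {loss0:.4f} -> {loss1:.4f}")
```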

Same rule with batches

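For a batch: $Z = XW^\top + \mathbf{b}$, with one example per row of $X$ and upstream gradient $G = \partial L / \partial Z$.

The linear-layer backward pass becomes: $\partial L / \partial W = G^\top X$, $\partial L / \partial \mathbf{b} = \sum_n G_{n,:}$ (sum over the batch), $\partial L / \partial X = G W$.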

Backprop is mostly matrix multiplication. PyTorch is not doing magic here; it is applying these local rules through the computation graph.
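A NumPy check of the batched rules under the `nn.Linear`-style convention $Z = XW^\top + \mathbf{b}$, with upstream gradient $G = \partial L / \partial Z$ (one row per example). It confirms that $G^\top X$ is just the per-example outer products $\boldsymbol{\delta}\mathbf{x}^\top$ summed over the batch. The data are random stand-ins.

```python
import numpy as np

rng = np.random.default_rng(1)
B, d_in, d_out = 5, 4, 3
X = rng.standard_normal((B, d_in))
W = rng.standard_normal((d_out, d_in))
G = rng.standard_normal((B, d_out))   # hypothetical upstream gradient dL/dZ

# Batched backward pass for Z = X W^T + b.
dW = G.T @ X          # (d_out, d_in)
db = G.sum(axis=0)    # (d_out,)
dX = G @ W            # (B, d_in)

# Same quantities, one example at a time.
dW_loop = sum(np.outer(G[n], X[n]) for n in range(B))
db_loop = sum(G[n] for n in range(B))
dX_loop = np.stack([W.T @ G[n] for n in range(B)])

print(np.allclose(dW, dW_loop), np.allclose(db, db_loop), np.allclose(dX, dX_loop))
```

If the loss is averaged over the batch, as it usually is, G already carries the 1/B factor, and the formulas stay the same.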

PART 4

Why go deep?

A teaser for Lecture 2

One layer is enough, in principle

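A single hidden layer with enough units can approximate any continuous function on a bounded input domain to any desired accuracy (the universal approximation theorem, UAT; next lecture).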

Q. So why do we ever use more than one?

Give an honest answer before turning the page.

Depth ⇒ hierarchical features

Biology inspired the hierarchy

Hubel & Wiesel (Nobel 1981) — the cat visual cortex is hierarchical.

| Brain region | Roughly analogous layer | Detects |
|---|---|---|
| V1 | early layer | oriented edges |
| V2 | next layer | textures, junctions |
| V4 | mid layer | shapes, parts |
| IT | late layer | objects, faces |

Biology suggested that hierarchical visual processing is useful. Modern neural networks are not literal brain models: the optimizer, data scale, loss function, and hardware are engineered.

Backprop as broken telephone

Think of backprop as a "telephone game" played backwards from the loss.

Each layer has to pass the error signal to the layer before it. If each layer multiplies the message by something less than 1 (for sigmoid, at most 0.25), then by the time the signal reaches the early layers it's a faint mumble.

Those early layers stop learning. This is the vanishing gradient problem · the topic of the next slide and a recurring theme through the course (RNN, deep MLPs, deep Transformers).

Why σ′ shrinks · let's compute it

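For sigmoid · $\sigma'(z) = \sigma(z)\,(1 - \sigma(z))$

Maximum value · at $z = 0$, where $\sigma(0) = 0.5$, so $\sigma'(0) = 0.5 \times 0.5 = 0.25$.

For large $\lvert z \rvert$, $\sigma(z)$ approaches 0 or 1, so $\sigma'(z) \to 0$ (e.g., $\sigma'(5) \approx 0.0066$).

Every layer with sigmoid multiplies the backward-flowing gradient by something $\le 0.25$.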
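A plain-Python sketch of these numbers. $\sigma'$ peaks at 0.25 at $z = 0$ and is tiny in the tails. A chain of $n$ such factors shrinks geometrically even in the best case, here ignoring the weights.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def dsigmoid(z):
    s = sigmoid(z)
    return s * (1.0 - s)            # sigma'(z) = sigma(z) * (1 - sigma(z))

print(dsigmoid(0.0))                # 0.25, the maximum
print(round(dsigmoid(5.0), 4))      # deep in the tail: 0.0066

# Best case for an n-layer sigmoid chain: every local factor at its maximum 0.25.
for n in (5, 10, 20):
    print(n, 0.25 ** n)
```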

Worked numeric · the gradient vanishes

A 5-layer sigmoid net. Assume all weights = 1 and inputs are in saturating regions so each $\sigma' \approx 0.1$.

| Layer | Local factor $\sigma'$ | Gradient signal |
|---|---|---|
| Output | n/a | 1.0 |
| L4 | 0.1 | 0.1 |
| L3 | 0.1 | 0.01 |
| L2 | 0.1 | 0.001 |
| L1 | 0.1 | 0.0001 |

The first layer gets $10^{-4}$ of the output's gradient signal: 10,000× weaker.

Depth has a cost · vanishing gradients

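Each sigmoid factor ≤ 0.25, so, ignoring the weights, the gradient reaching the first layer of an $n$-layer stack is at most $0.25^n$:

| Depth $n$ | Upper bound on gradient magnitude |
|---|---|
| 5 | $0.25^{5} \approx 9.8 \times 10^{-4}$ |
| 10 | $0.25^{10} \approx 9.5 \times 10^{-7}$ |
| 20 | $0.25^{20} \approx 9.1 \times 10^{-13}$ |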

Early layers effectively stop learning. This blocked depth for 20 years.

The fix · ReLU

$\mathrm{ReLU}(z) = \max(0, z)$, so $\mathrm{ReLU}'(z) = 1$ for $z > 0$ and $0$ for $z < 0$. For active neurons the gradient is exactly 1, with no shrinkage.

But ReLU alone is not enough for very deep networks:

| Problem | Tool |
|---|---|
| activation variance shrinks or explodes | Xavier / He initialization |
| gradients weaken through many layers | residual connections |
| optimization is scale-sensitive | normalization layers |

Lecture 2 derives why these tools make depth trainable.

PART 5

The training loop

The five lines you'll type every day this semester

The training cycle

The PyTorch training loop · code

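```python
import torch
import torch.nn as nn

device = "cuda" if torch.cuda.is_available() else "cpu"

model = MLP(784, 256, 10).to(device)      # MLP class from the earlier slide
criterion = nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=0.01, momentum=0.9)

for epoch in range(10):
    model.train()                         # training-mode behaviour for dropout / BatchNorm
    for x, y in loader:                   # loader: assumed DataLoader of (images, labels)
        x, y = x.view(-1, 784).to(device), y.to(device)

        logits = model(x)                 # 1. forward
        loss = criterion(logits, y)       # 2. loss
        optimizer.zero_grad()             # 3. zero grads
        loss.backward()                   # 4. backward
        optimizer.step()                  # 5. update
```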

Every real training script is a variation on this.

One common bug · zero_grad

Q. What if you forget optimizer.zero_grad()?

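PyTorch accumulates gradients by default. Without zeroing, each backward adds to the previous `.grad`. After $k$ steps, `.grad` holds the sum of all $k$ batch gradients, so every update follows a growing, stale direction.

One of the most common PyTorch bugs.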
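A tiny PyTorch demonstration, using a hypothetical single scalar parameter and the loss $L = w^2$, so $\partial L/\partial w = 2w$. Calling `backward()` twice without zeroing leaves twice the true gradient in `.grad`. `optimizer.zero_grad()` clears it: depending on the PyTorch version's `set_to_none` default, it either zeros `.grad` or sets it to `None`. Either way, the next backward pass starts fresh.

```python
import torch

# Hypothetical one-parameter model with loss L = w**2, so dL/dw = 2w.
w = torch.tensor(3.0, requires_grad=True)
optimizer = torch.optim.SGD([w], lr=0.1)

# The bug: two backward passes with no zero_grad() in between.
for _ in range(2):
    loss = w ** 2            # dL/dw = 2w = 6 at w = 3
    loss.backward()          # adds 6 into w.grad each time
accumulated = w.grad.item()
print(accumulated)           # 12.0: the two gradients were summed

# The fix: clear .grad before the next backward pass.
optimizer.zero_grad()
loss = w ** 2
loss.backward()
fresh = w.grad.item()
print(fresh)                 # 6.0
```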

Train mode vs eval mode

Some layers behave differently during training and evaluation.

| Mechanism | `model.train()` | `model.eval()` |
|---|---|---|
| Dropout | randomly masks activations | uses all activations |
| BatchNorm | updates running statistics | uses stored statistics |
| Autograd | tracks gradients if enabled | still tracks unless disabled |

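```python
model.eval()               # dropout off, BatchNorm uses stored statistics
with torch.no_grad():      # no autograd graph: faster, less memory
    logits = model(x_val)
```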

For validation and test: use model.eval() and torch.no_grad(). Otherwise your measured performance may be noisy, slower, or wrong.

Train / val / test

Splits must match the real question

Random splitting is not always honest.

| Data type | Bad split | Better split |
|---|---|---|
| medical images | random image split | split by patient |
| video frames | random frame split | split by video / scene |
| recommender logs | random row split | split by user or time |
| documents | random paragraph split | split by document / source |
| sensors | random window split | split by device / location |

Validation should answer the question: "Will this model work on new cases we actually care about?"
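A minimal plain-Python sketch of a group-aware split. Whole groups (patients, videos, users, ...) go to one side only, so no group leaks across. The function `split_by_group` and the record fields are hypothetical, and the validation fraction here counts groups, not samples. scikit-learn's `GroupShuffleSplit` and `GroupKFold` implement the same idea.

```python
import random

def split_by_group(samples, group_of, val_fraction=0.2, seed=0):
    """Split samples so each group lands entirely in train or entirely in val."""
    groups = sorted({group_of(s) for s in samples})
    rng = random.Random(seed)
    rng.shuffle(groups)
    n_val = max(1, round(val_fraction * len(groups)))
    val_groups = set(groups[:n_val])
    train = [s for s in samples if group_of(s) not in val_groups]
    val = [s for s in samples if group_of(s) in val_groups]
    return train, val

# Hypothetical medical-imaging records: three images per patient.
records = [{"patient": p, "image": f"{p}_img{i}"} for p in "ABCDE" for i in range(3)]
train, val = split_by_group(records, group_of=lambda r: r["patient"])

train_patients = {r["patient"] for r in train}
val_patients = {r["patient"] for r in val}
print(sorted(train_patients), sorted(val_patients))
```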

Training loss ↓ ≠ model better

Overfitting · the rule

- Training loss keeps dropping (noisily, under SGD).

- Validation loss drops, then rises.

- The widening gap between them is overfitting.

Never tune on the test set.

Test = final exam you take once.

Validation = practice exams, take many times.

Training = studying.

Putting it all together · the L01 master sentence

A neural network is just a stack of (linear → non-linearity) blocks · the linear layer mixes features, the non-linearity bends the space. Backprop assigns blame layer-by-layer using the chain rule, and SGD updates parameters in the descent direction.

| Step | What happens | Where it came from |
|---|---|---|
| 1 · Forward | composition of layers | |
| 2 · Loss | NLL of the chosen distribution (L00) | |
| 3 · Backward | chain rule on the computation graph | |
| 4 · Update | SGD | |

Sigmoid + BCE = L00's logistic-MLE, just with a hidden layer in front. Softmax + CE = L00's multiclass NLL. Same probabilistic story, more layers.

Practice problems

Try these on paper; verify with the notebooks.

P1. A 1-hidden-layer MLP for MNIST has

P2. Show that for sigmoid

P3. Build a 2-hidden-unit ReLU MLP that computes the AND function (

P4. A 3-class classifier outputs logits

P5. Why does PyTorch's CrossEntropyLoss take logits rather than probabilities as input? Give two reasons (numerical and gradient-related).

P6. A 10-layer sigmoid network has weights initialized as