Why K-Fold Works

| Single Split | K-Fold CV |
|---|---|
| Uses 80% for training | Uses 100% of data (across all folds) |
| One score (could be lucky) | K scores → average is more reliable |
| High variance | Low variance |
| Can't detect unstable models | Standard deviation shows stability |

Example: Score = 89% ± 1.5% tells us much more than just "89%"

K-Fold in sklearn

```python
from sklearn.model_selection import cross_val_score
from sklearn.linear_model import LogisticRegression

model = LogisticRegression()

# 5-fold cross-validation (X, y: features and labels, assumed already loaded)
scores = cross_val_score(model, X, y, cv=5)

print(f"Scores per fold: {scores}")
# [0.87, 0.89, 0.91, 0.88, 0.90]

print(f"Mean: {scores.mean():.3f} ± {scores.std():.3f}")
# Mean: 0.890 ± 0.014
```
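
To see what happens inside `cross_val_score`, here is a minimal sketch of the index bookkeeping behind K-fold. `k_fold_indices` is a hypothetical helper written for illustration; it is not sklearn's implementation (sklearn's `KFold` does this job for real).

```python
def k_fold_indices(n_samples, k):
    """Yield (train_idx, val_idx) pairs; each sample is validated exactly once."""
    indices = list(range(n_samples))
    # Spread the remainder over the first folds so sizes differ by at most 1
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val_idx = indices[start:start + size]
        train_idx = indices[:start] + indices[start + size:]
        yield train_idx, val_idx
        start += size


folds = list(k_fold_indices(n_samples=10, k=5))
for i, (train_idx, val_idx) in enumerate(folds):
    print(f"Fold {i}: train on {len(train_idx)} samples, validate on {val_idx}")
```

Every sample lands in exactly one validation fold, which is why K-fold "uses 100% of data" across all folds.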

Choosing K: Trade-offs

| K | Name | Pros | Cons |
|---|---|---|---|
| 5 | 5-Fold | Fast, good default | Slightly higher variance |
| 10 | 10-Fold | More reliable estimate | 2x slower |
| n | Leave-One-Out | Uses maximum data | Very slow, high variance |

Rule of thumb: Use K=5 for quick experiments, K=10 for final evaluation.
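
The cost side of this trade-off is simple arithmetic. The sketch below assumes a hypothetical dataset of 1000 samples; `k_fold_cost` is an illustrative helper, not a library function.

```python
def k_fold_cost(n_samples, k):
    """Return (number of model fits, training samples per fit) for K-fold CV."""
    train_per_fit = n_samples - n_samples // k  # roughly (K-1)/K of the data
    return k, train_per_fit


n = 1000  # hypothetical dataset size
for k in (5, 10, n):  # k == n is leave-one-out
    fits, train_per_fit = k_fold_cost(n, k)
    print(f"K={k}: {fits} fits, {train_per_fit} training samples each "
          f"({train_per_fit / n:.1%} of the data)")
```

Going from K=5 to K=10 doubles the number of fits, while leave-one-out needs one fit per sample.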

But Wait... What About Hyperparameters?

New problem: We want to tune hyperparameters (like C, the inverse regularization strength)

```python
# Which C is best?
for C in [0.01, 0.1, 1, 10, 100]:
    model = LogisticRegression(C=C)
    score = cross_val_score(model, X, y, cv=5).mean()
    print(f"C={C}: {score:.3f}")
```

Danger: We used the test folds to CHOOSE the best C!

Now our "test" score is biased — we've leaked information!

The Data Leakage Problem

What went wrong:

- We evaluated C=0.01 on folds → got a score

- We evaluated C=0.1 on folds → got a score

- We picked the C with best score

- We reported that score as "test accuracy"

But that score was used to MAKE A DECISION!

It's like a student seeing the test before the exam.

Data Leakage: A Hiring Analogy

Imagine you're hiring:

| Proper Process | Leaky Process |
|---|---|
| Screen resumes blindly | See interview performance first |
| Interview candidates | Then "predict" who'll do well |
| Measure: "How good am I at predicting?" | Cheating! You already know! |

In ML:

- Test set = the "real interview"

- Using test set to choose model = seeing answers first

- Your "accuracy" is now meaningless

If you make ANY decision based on test data, your evaluation is biased!
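
A small simulation can make this bias visible. The sketch below is purely illustrative: the labels are coin flips and the "models" are random guessers (all names and sizes are made up), so no model can truly beat 50%. Picking the best one by test score still produces an impressive-looking number.

```python
import random

random.seed(0)

n_test = 100      # size of the (hypothetical) test set
n_models = 50     # number of candidate "models" we compare on it

# Labels are pure coin flips, so no model can truly beat 50% accuracy.
y_test = [random.randint(0, 1) for _ in range(n_test)]
y_fresh = [random.randint(0, 1) for _ in range(n_test)]  # new, unseen data


def accuracy(preds, labels):
    return sum(p == t for p, t in zip(preds, labels)) / len(labels)


# Each "model" just guesses at random, both on the test set and on fresh data.
models = []
for _ in range(n_models):
    test_preds = [random.randint(0, 1) for _ in range(n_test)]
    fresh_preds = [random.randint(0, 1) for _ in range(n_test)]
    models.append((accuracy(test_preds, y_test), accuracy(fresh_preds, y_fresh)))

# Leaky step: pick the model by its test score, then report that same score.
best_test_acc, best_fresh_acc = max(models, key=lambda m: m[0])
mean_test_acc = sum(m[0] for m in models) / n_models

print(f"Average test accuracy over all models: {mean_test_acc:.2f}")
print(f"Test accuracy of the selected model:   {best_test_acc:.2f}")
print(f"Same model on fresh data:              {best_fresh_acc:.2f}")
```

The selected model's test accuracy sits above the average only because it was selected on that score; on fresh data it is expected to fall back toward chance.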

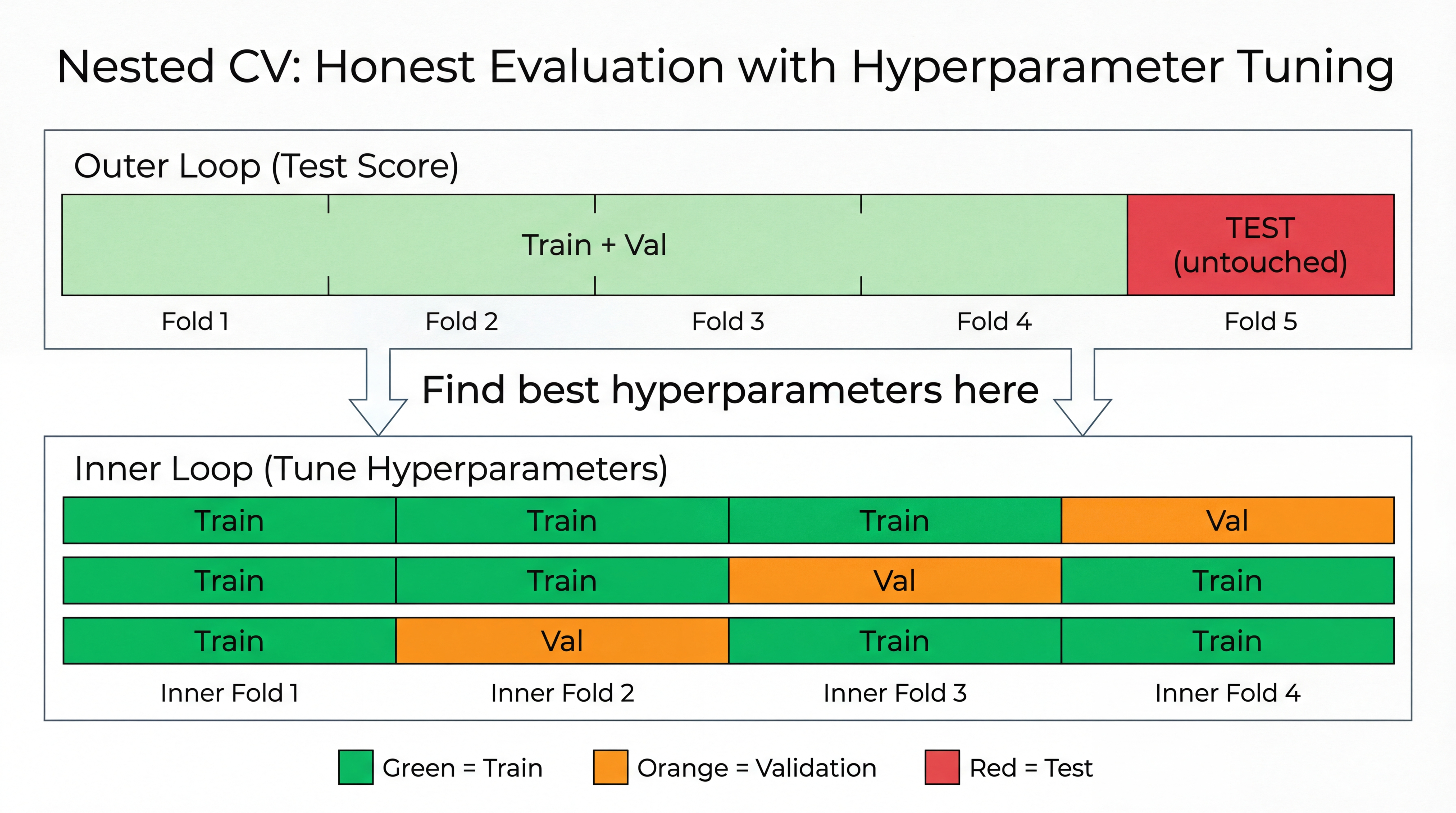

Nested Cross-Validation: The Fix

How Nested CV Works

| Loop | What It Does | Uses |
|---|---|---|
| Outer loop | Gives honest test score | Test fold (never touched during tuning) |
| Inner loop | Finds best hyperparameters | Train + Val folds only |

Process for each outer fold:

- Hold out test fold (don't touch it!)

- Run inner CV on remaining data to find best hyperparameters

- Train final model with best hyperparameters

- Evaluate on test fold → one honest score

Average all outer fold scores → reliable estimate!

Nested CV in sklearn

```python
from sklearn.model_selection import cross_val_score, GridSearchCV
from sklearn.linear_model import LogisticRegression

# Inner loop: find best hyperparameters using 3-fold CV
param_grid = {'C': [0.01, 0.1, 1, 10, 100]}
inner_cv = GridSearchCV(LogisticRegression(), param_grid, cv=3)

# Outer loop: honest evaluation using 5-fold CV
scores = cross_val_score(inner_cv, X, y, cv=5)

print(f"Nested CV score: {scores.mean():.3f} ± {scores.std():.3f}")
# This score is honest: no data leakage!
```
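
The same idea can be written as an explicit loop, which maps one-to-one onto the four steps for each outer fold. This is a sketch under assumptions: the data is synthetic (`make_classification`), `max_iter=1000` is set only to avoid convergence warnings, and the outer loop uses a plain shuffled `KFold`, whereas `cross_val_score` with `cv=5` uses stratified folds for classifiers, so the numbers will not match the compact version exactly.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, KFold

# Synthetic data, used only so the example runs on its own
X, y = make_classification(n_samples=200, n_features=10, random_state=0)

param_grid = {"C": [0.01, 0.1, 1, 10, 100]}
outer_cv = KFold(n_splits=5, shuffle=True, random_state=0)

outer_scores = []
for train_idx, test_idx in outer_cv.split(X):
    # 1. Hold out the test fold
    X_train, y_train = X[train_idx], y[train_idx]
    X_test, y_test = X[test_idx], y[test_idx]

    # 2. Inner CV on the remaining data picks the best C
    search = GridSearchCV(LogisticRegression(max_iter=1000), param_grid, cv=3)
    # 3. fit() also retrains the best setting on all of X_train (refit=True)
    search.fit(X_train, y_train)

    # 4. One honest score on the untouched test fold
    outer_scores.append(search.score(X_test, y_test))

mean_score = sum(outer_scores) / len(outer_scores)
print(f"Nested CV score: {mean_score:.3f} over {len(outer_scores)} outer folds")
```

Note that each outer fold may pick a different `C`; nested CV estimates the performance of the whole tuning procedure, not of one fixed setting.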

Summary: When to Use What

| Situation | Method | Why |
|---|---|---|
| Evaluate a fixed model | K-Fold CV | Reliable score, no tuning needed |
| Tune hyperparameters + evaluate | Nested CV | No data leakage |
| Compare two models (no tuning) | K-Fold CV | Compare mean ± std |
| Final deployment | Retrain on ALL data | Use every example |

Golden rule: Never use the same data to both CHOOSE and EVALUATE your model!

Part 4: Practical Guidelines

Making Good Model Choices

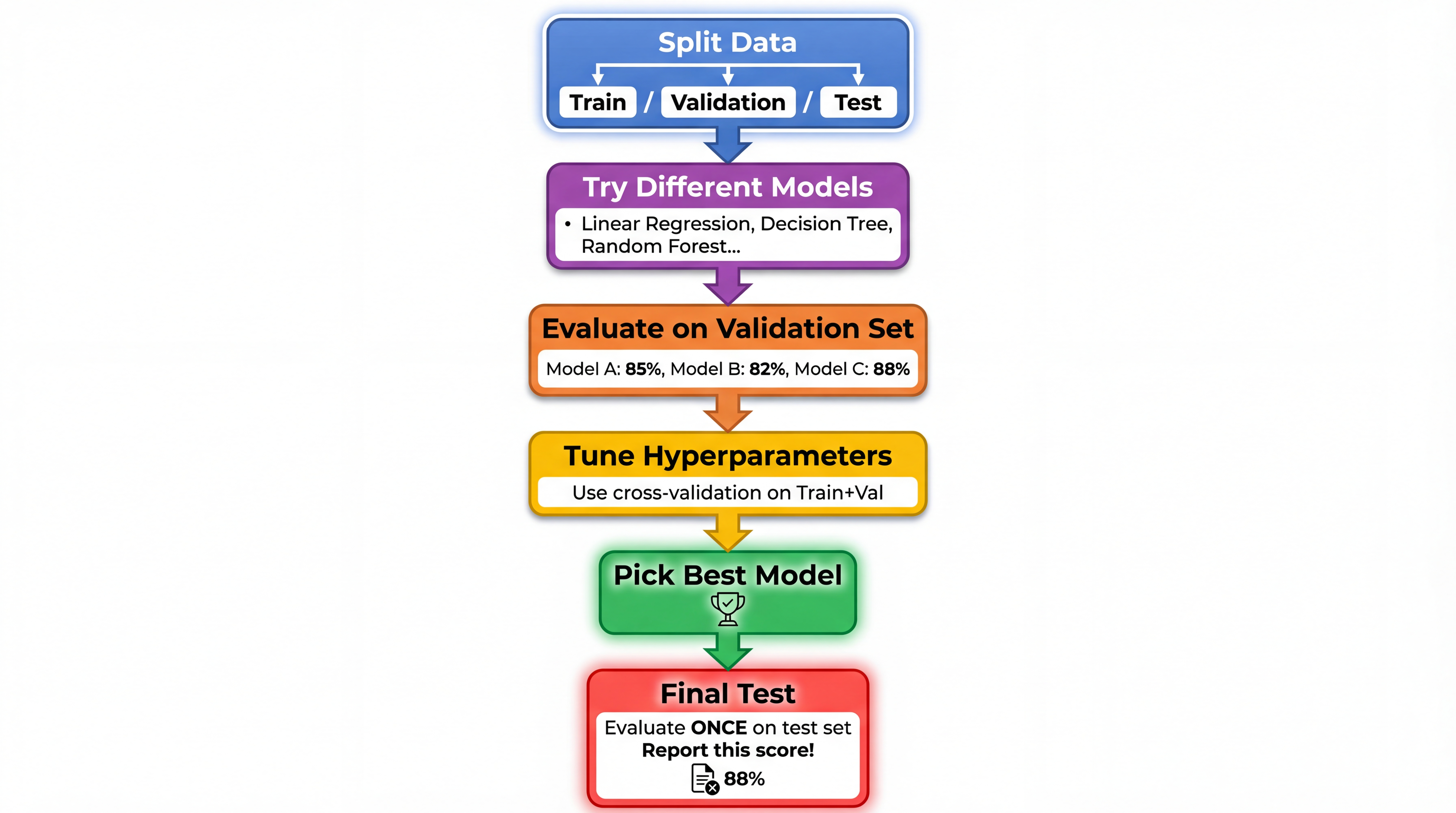

The Model Selection Workflow

Step-by-Step: Model Selection

| Step | Action | Tools |
|---|---|---|
| 1. Split | Separate test set (don't touch!) | train_test_split |
| 2. Explore | Try different model types | LogisticRegression, DecisionTree, etc. |
| 3. Compare | Use cross-validation | cross_val_score |
| 4. Tune | Find best hyperparameters | GridSearchCV |
| 5. Select | Pick best model | Look at mean ± std |
| 6. Final Test | Report honest score | Evaluate on test set ONCE |
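
The six steps can be strung together roughly as follows. This is a sketch, not a recipe: the data is synthetic, the two candidate models and their parameter grids are illustrative choices, and `max_iter=1000` is only there to avoid convergence warnings.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV, cross_val_score, train_test_split
from sklearn.tree import DecisionTreeClassifier

# Synthetic data, used only so the example runs on its own
X, y = make_classification(n_samples=300, n_features=10, random_state=0)

# 1. Split: lock the test set away
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42
)

# 2-3. Explore and compare model types with cross-validation on training data
candidates = {
    "logreg": LogisticRegression(max_iter=1000),
    "tree": DecisionTreeClassifier(random_state=0),
}
cv_means = {}
for name, estimator in candidates.items():
    scores = cross_val_score(estimator, X_train, y_train, cv=5)
    cv_means[name] = scores.mean()
    print(f"{name}: {scores.mean():.3f} ± {scores.std():.3f}")

# 4-5. Tune the most promising model type and select it
# (the grids below are illustrative choices, not recommendations)
param_grids = {
    "logreg": {"C": [0.01, 0.1, 1, 10, 100]},
    "tree": {"max_depth": [2, 3, 5, 10, None]},
}
best_name = max(cv_means, key=cv_means.get)
search = GridSearchCV(candidates[best_name], param_grids[best_name], cv=5)
search.fit(X_train, y_train)

# 6. Final test: evaluate on the test set ONCE
test_score = search.score(X_test, y_test)
print(f"Selected {best_name} {search.best_params_}, test accuracy: {test_score:.3f}")
```

Everything before step 6 touches only `X_train` and `y_train`; the test set appears in exactly one line.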

Common Mistakes to Avoid

| Mistake | Why It's Bad | Fix |
|---|---|---|
| Testing on training data | Overly optimistic scores | Always use separate test set |
| Tuning on test set | Leaks information | Use validation set for tuning |
| Picking model by test score | Test set becomes validation | Use cross-validation |
| Reporting validation score as final | Not an honest estimate | Report test score |
| Testing multiple times | "Overfitting" to test set | Test only ONCE |

Common Hyperparameters

Hyperparameters = Settings YOU choose before training

| Model | Hyperparameter | What It Does |
|---|---|---|
| Linear Regression | - | None (that's its beauty!) |
| Logistic Regression | C | Controls regularization (smaller C = stronger regularization) |
| Decision Tree | max_depth | Limits tree complexity |
| Neural Network | Learning rate | How fast to learn |

Simple Hyperparameter Tuning

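```python
from sklearn.tree import DecisionTreeClassifier
from sklearn.model_selection import cross_val_score

# Try different max_depth values (None = no depth limit)
for depth in [2, 3, 5, 10, None]:
    model = DecisionTreeClassifier(max_depth=depth)
    scores = cross_val_score(model, X, y, cv=5)
    print(f"depth={depth}: {scores.mean():.3f}")

# Example output:
# depth=2: 0.780     ← Underfitting
# depth=3: 0.850
# depth=5: 0.880     ← Sweet spot
# depth=10: 0.850
# depth=None: 0.750  ← Overfitting
```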

What to Report

When presenting your model, always report:

| Metric | Why |
|---|---|
| Training accuracy | Shows if model learns |
| Validation accuracy | Shows if model generalizes |
| Test accuracy | The honest final score |
| Standard deviation | Shows reliability |

Red Flags to Watch For

| Observation | Problem | Solution |
|---|---|---|
| Train = 99%, Test = 60% | Overfitting | Simpler model, more data |
| Train = 55%, Test = 50% | Underfitting | Complex model, more features |
| Huge variance in CV scores | Unstable model | More data, simpler model |
| Test score much better than val | Data leakage! | Check your pipeline |

Summary: The Key Ideas

- Overfitting = memorizing, not learning
  - High training accuracy, low test accuracy

- Underfitting = too simple to learn
  - Low training AND test accuracy

- Train/Validation/Test split is essential
  - Never use test set for model selection!

- Cross-validation gives reliable estimates
  - Use `cross_val_score` in sklearn

- Use

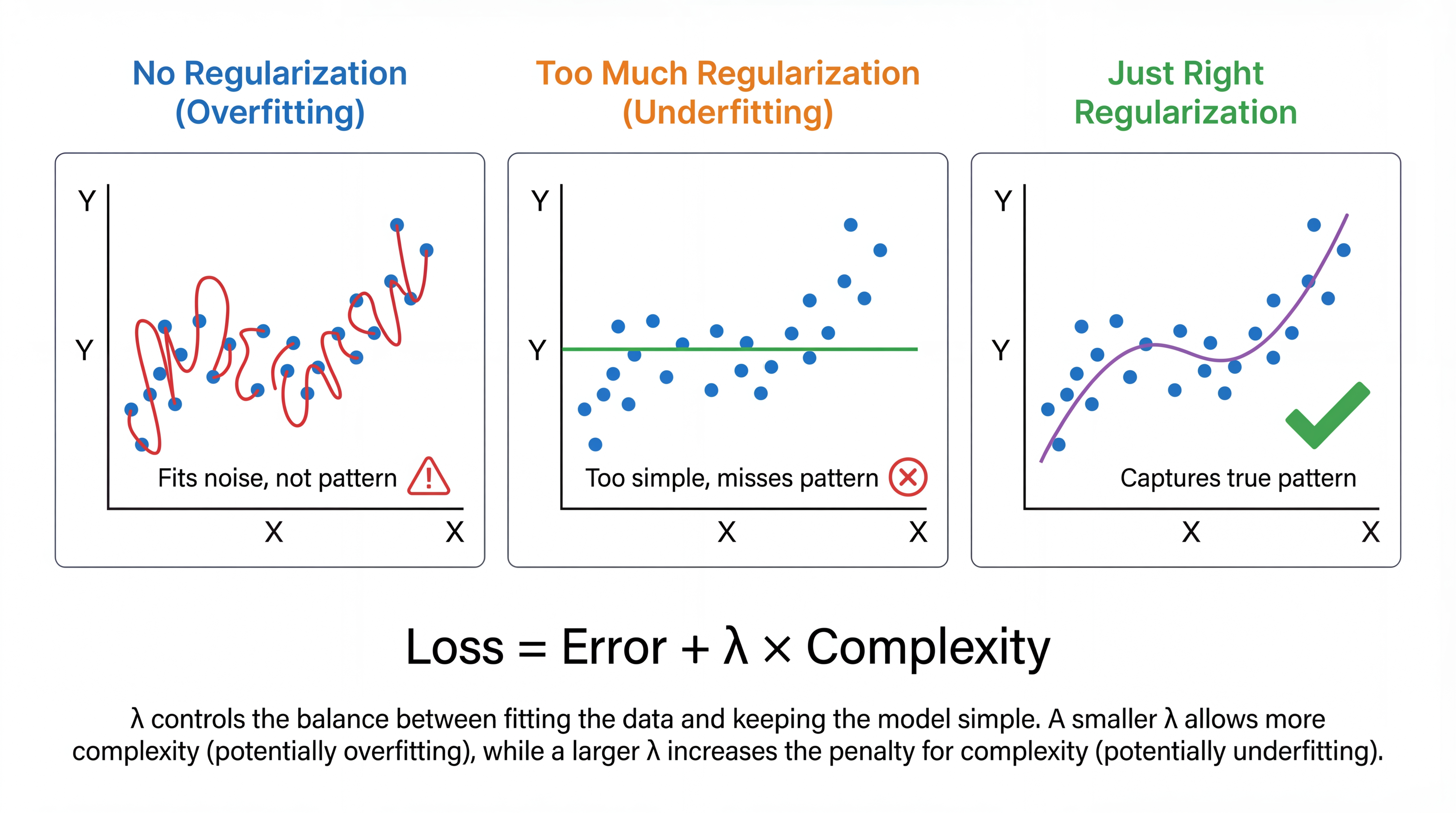

Regularization: Preventing Overfitting

What is Regularization?

Regularization = Add a penalty for complexity

| λ (lambda) | Effect |
|---|---|
| λ = 0 | No regularization → may overfit |
| λ small | Light penalty → slight smoothing |
| λ large | Heavy penalty → may underfit |

λ is a hyperparameter you tune!
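
The effect of λ can be checked by hand on the simplest possible case. The sketch below assumes a one-feature linear model without intercept, trained with squared error plus an L2 penalty, i.e. minimizing Σ(y − w·x)² + λ·w². Setting the derivative to zero gives w = Σxy / (Σx² + λ). The data points are made up for illustration.

```python
# Toy data following roughly y = 2x (made up for illustration)
xs = [1.0, 2.0, 3.0, 4.0]
ys = [2.1, 3.9, 6.2, 7.8]


def ridge_weight(xs, ys, lam):
    """Minimizer of sum((y - w*x)^2) + lam * w^2 for a single weight w."""
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)


lambdas = [0, 1, 10, 100, 1000]
weights = [ridge_weight(xs, ys, lam) for lam in lambdas]
for lam, w in zip(lambdas, weights):
    print(f"λ = {lam:>4}: w = {w:.3f}")
```

With λ = 0 the weight is the ordinary least-squares fit (about 2); as λ grows, the weight is pulled toward zero and the model eventually underfits.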

Regularization Intuition

Think of it like a budget constraint:

| Without Regularization | With Regularization |
|---|---|
| "Use as many weights as you want!" | "Each weight costs you!" |
| Model goes wild, memorizes | Model stays simple, generalizes |

Analogy: Writing an essay

- No limit → rambling, covers every detail

- Word limit → focused, captures key points

Regularization forces the model to be efficient with its parameters!

Types of Regularization

| Type | Formula | Effect |
|---|---|---|
| Ridge (L2) | $\text{Loss} + \lambda \sum_j w_j^2$ | Shrinks all weights toward zero |
| Lasso (L1) | $\text{Loss} + \lambda \sum_j \lvert w_j \rvert$ | Makes some weights exactly zero |
| Elastic Net | Both L1 + L2 | Combines benefits |

```python
from sklearn.linear_model import Ridge, Lasso

# alpha is sklearn's name for the regularization strength λ
ridge = Ridge(alpha=1.0)  # L2: all features kept, smaller weights
lasso = Lasso(alpha=0.1)  # L1: some features removed entirely
```
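
Why does L1 produce exact zeros while L2 only shrinks? A special case gives the intuition. Assuming the features are orthonormal, each regularized weight depends only on its own unregularized least-squares weight w: ridge rescales it to w / (1 + λ), and lasso applies soft-thresholding, sign(w) · max(|w| − λ, 0) (up to how λ is scaled in each objective). The weights below are hypothetical.

```python
def ridge_shrink(w, lam):
    """L2: scale every weight by the same factor; none becomes exactly zero."""
    return w / (1.0 + lam)


def lasso_shrink(w, lam):
    """L1 (soft-thresholding): subtract lam from the magnitude, clip at zero."""
    magnitude = abs(w) - lam
    if magnitude <= 0:
        return 0.0
    return magnitude if w > 0 else -magnitude


unregularized = [3.0, -1.5, 0.4, -0.2]  # hypothetical least-squares weights
lam = 0.5

ridge_weights = [ridge_shrink(w, lam) for w in unregularized]
lasso_weights = [lasso_shrink(w, lam) for w in unregularized]

print("ridge:", [round(w, 3) for w in ridge_weights])
print("lasso:", [round(w, 3) for w in lasso_weights])
```

Ridge keeps all four weights (smaller, but nonzero), while lasso removes the two weights whose magnitude is below λ. That removal is the feature-selection effect.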

When to Use Regularization?

| Situation | Recommendation |
|---|---|
| Many features, little data | Strong regularization |
| Few features, lots of data | Light or no regularization |
| Features highly correlated | Ridge (L2) works better |
| Want feature selection | Lasso (L1) |
| Neural networks | Use Dropout or L2 |

Almost always use some regularization — it rarely hurts!

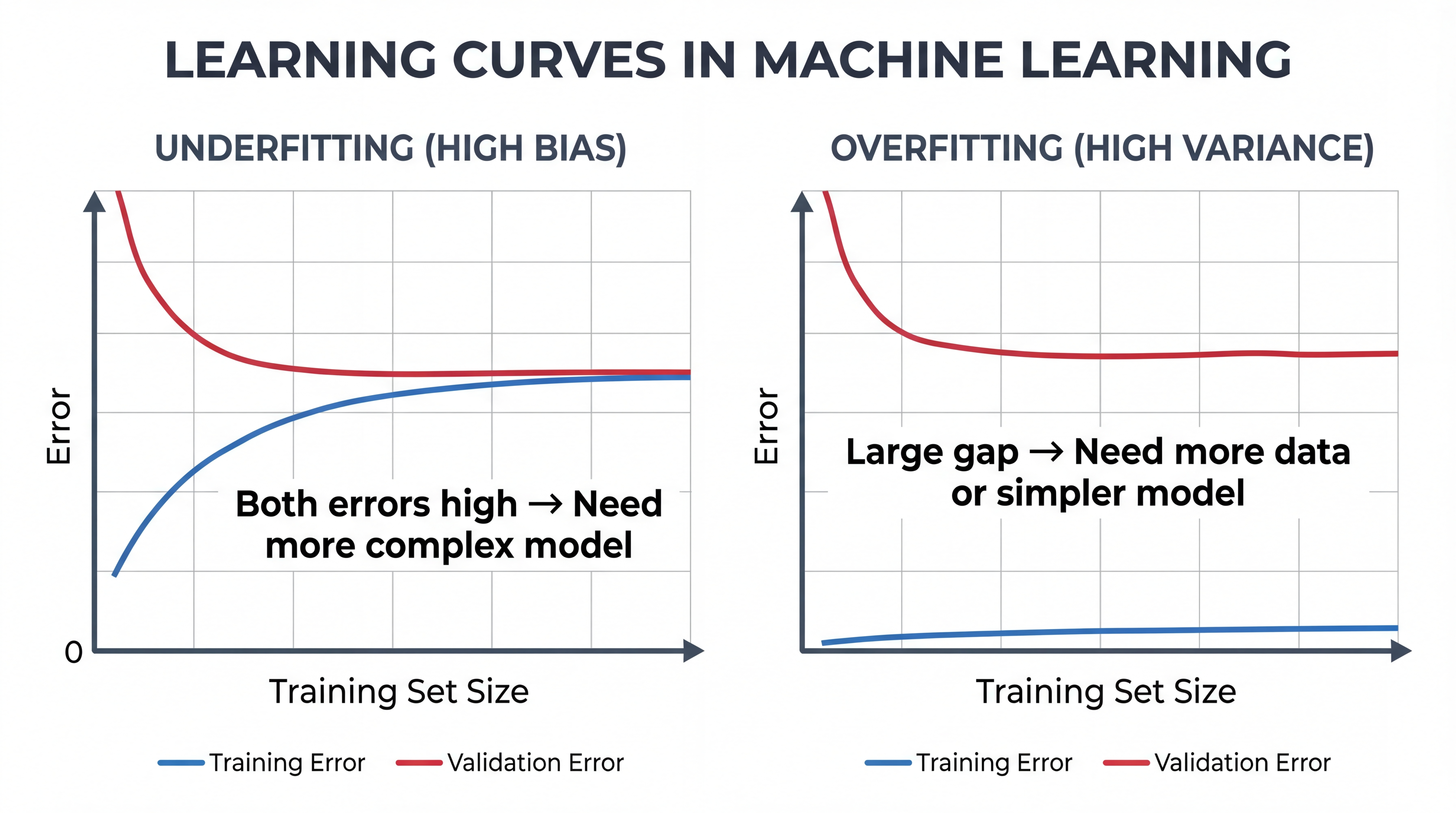

Learning Curves: Diagnosing Problems

Reading Learning Curves

| Pattern (error curves) | Diagnosis | Solution |
|---|---|---|
| Train and val error both high, converge | Underfitting | More features, complex model |
| Train error low, val error high, gap | Overfitting | More data, regularization |
| Train and val error both low, converge | Good fit! | You're done |

```python
from sklearn.model_selection import learning_curve

# Returns scores (higher is better); for accuracy, error = 1 - score
train_sizes, train_scores, val_scores = learning_curve(
    model, X, y, cv=5, train_sizes=[0.2, 0.4, 0.6, 0.8, 1.0]
)
```
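
The returned arrays have one row per training-set size and one column per CV fold, so they are usually averaged over folds before reading or plotting. A minimal sketch, assuming synthetic data and an unrestricted decision tree (both chosen only so the example runs and shows a visible gap):

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import learning_curve
from sklearn.tree import DecisionTreeClassifier

# Synthetic data and an unrestricted tree, chosen only to make the example runnable
X, y = make_classification(n_samples=500, n_features=20, random_state=0)
model = DecisionTreeClassifier(random_state=0)

train_sizes, train_scores, val_scores = learning_curve(
    model, X, y, cv=5, train_sizes=[0.2, 0.4, 0.6, 0.8, 1.0]
)

# Each row is one training-set size, each column one CV fold
train_mean = train_scores.mean(axis=1)
val_mean = val_scores.mean(axis=1)

for n, tr, va in zip(train_sizes, train_mean, val_mean):
    print(f"n={int(n):4d}  train acc={tr:.3f}  val acc={va:.3f}  gap={tr - va:.3f}")
```

A gap that stays large as n grows points to overfitting; two curves that meet at a low accuracy point to underfitting.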

Grid Search: Automated Tuning

Instead of manually trying hyperparameters:

```python
from sklearn.model_selection import GridSearchCV
from sklearn.tree import DecisionTreeClassifier

param_grid = {
    'max_depth': [3, 5, 10, None],
    'min_samples_split': [2, 5, 10]
}

grid_search = GridSearchCV(
    DecisionTreeClassifier(),
    param_grid,
    cv=5,
    scoring='accuracy'
)

grid_search.fit(X_train, y_train)

print(f"Best params: {grid_search.best_params_}")
print(f"Best score: {grid_search.best_score_:.3f}")  # mean CV accuracy, not a test score
```
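
It helps to know how much work a grid implies. The sketch below enumerates the same grid with the standard library; it only illustrates the counting and is not how `GridSearchCV` is implemented internally.

```python
from itertools import product

param_grid = {
    "max_depth": [3, 5, 10, None],
    "min_samples_split": [2, 5, 10],
}
cv = 5

# Every combination of one value per hyperparameter is one candidate
names = list(param_grid)
candidates = [dict(zip(names, values)) for values in product(*param_grid.values())]

n_fits = len(candidates) * cv
print(f"{len(candidates)} candidates x {cv} folds = {n_fits} fits")
print("First candidate:", candidates[0])
```

With the default `refit=True`, `GridSearchCV` adds one more fit at the end to retrain the best candidate on all of the training data. The count multiplies with every hyperparameter added, which is why large grids get slow quickly.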

What We Skipped (Advanced Topics)

These are important but more advanced:

| Topic | What It Is |
|---|---|
| Bias-Variance Tradeoff | Mathematical view of underfitting/overfitting |
| Ensemble Methods | Combining multiple models (Random Forest, etc.) |
| Bayesian Optimization | Smarter hyperparameter search |
| Early Stopping | Stop training when validation error increases |

You'll learn these in advanced ML courses!

Code Summary

```python
from sklearn.model_selection import train_test_split, cross_val_score

# Split data
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, random_state=42
)

# Cross-validation comparison (training data only)
scores = cross_val_score(model, X_train, y_train, cv=5)
print(f"CV Score: {scores.mean():.3f} ± {scores.std():.3f}")

# Final evaluation (only once!)
# cross_val_score fits clones of the model, so fit the model itself first
model.fit(X_train, y_train)
final_score = model.score(X_test, y_test)
```

PyTorch: Data Splitting

```python
from torch.utils.data import random_split, DataLoader

# Assume dataset has 1000 samples
dataset = MyDataset(...)

# Split: 60% train, 20% val, 20% test
train_size = int(0.6 * len(dataset))
val_size = int(0.2 * len(dataset))
test_size = len(dataset) - train_size - val_size

train_set, val_set, test_set = random_split(
    dataset, [train_size, val_size, test_size]
)
```
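
Two details are worth seeing on a concrete dataset: computing `test_size` as the remainder keeps the three sizes summing to `len(dataset)` even when the percentages do not divide evenly, and passing a seeded generator makes the split reproducible. The sketch below uses a stand-in `TensorDataset` with 1003 random samples; the sizes and the seed are arbitrary assumptions.

```python
import torch
from torch.utils.data import TensorDataset, random_split

# Stand-in dataset with 1003 samples (a size that does not divide evenly)
features = torch.randn(1003, 4)
labels = torch.randint(0, 2, (1003,))
dataset = TensorDataset(features, labels)

train_size = int(0.6 * len(dataset))
val_size = int(0.2 * len(dataset))
test_size = len(dataset) - train_size - val_size  # remainder absorbs rounding

# A seeded generator makes the split identical on every run
generator = torch.Generator().manual_seed(42)
train_set, val_set, test_set = random_split(
    dataset, [train_size, val_size, test_size], generator=generator
)

print(len(train_set), len(val_set), len(test_set))
```

Without a fixed seed, every run produces a different test set, which makes scores hard to compare between experiments.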

PyTorch: DataLoaders

```python
# Create DataLoaders for batching
train_loader = DataLoader(train_set, batch_size=32, shuffle=True)
val_loader = DataLoader(val_set, batch_size=32, shuffle=False)
test_loader = DataLoader(test_set, batch_size=32, shuffle=False)

# Training loop
for epoch in range(num_epochs):
    for X_batch, y_batch in train_loader:
        # Train on batch
        ...

    # Validate after each epoch
    for X_batch, y_batch in val_loader:
        # Compute validation loss
        ...
```

PyTorch: K-Fold Cross-Validation

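```python
from sklearn.model_selection import KFold
from torch.utils.data import DataLoader, Subset

kfold = KFold(n_splits=5, shuffle=True)

# KFold only needs the number of samples, so split over the index list
indices = list(range(len(dataset)))
for fold, (train_idx, val_idx) in enumerate(kfold.split(indices)):
    train_subset = Subset(dataset, train_idx.tolist())
    val_subset = Subset(dataset, val_idx.tolist())

    train_loader = DataLoader(train_subset, batch_size=32, shuffle=True)
    val_loader = DataLoader(val_subset, batch_size=32)

    # Train and evaluate for this fold (train_and_evaluate is defined elsewhere).
    # Re-initialize the model for every fold so weights never carry over
    # from a fold that trained on this fold's validation data.
    score = train_and_evaluate(model, train_loader, val_loader)
    print(f"Fold {fold}: {score:.3f}")
```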

Questions?

Next Lecture: Neural Networks

From linear models to deep learning!
