Code

    import numpy as np
    import matplotlib.pyplot as plt
    import tensorflow as tf
    import seaborn as sns
    import tensorflow_probability as tfp
    import pandas as pd

    tfd = tfp.distributions
    tfl = tfp.layers

    from tensorflow.keras.models import Sequential
    from tensorflow.keras.layers import Dense
    from tensorflow.keras.optimizers import RMSprop
    from tensorflow.keras.callbacks import Callback

    sns.set_context(context='talk', font_scale=1)
    %matplotlib inline
    %config InlineBackend.figure_format = 'retina'

Code

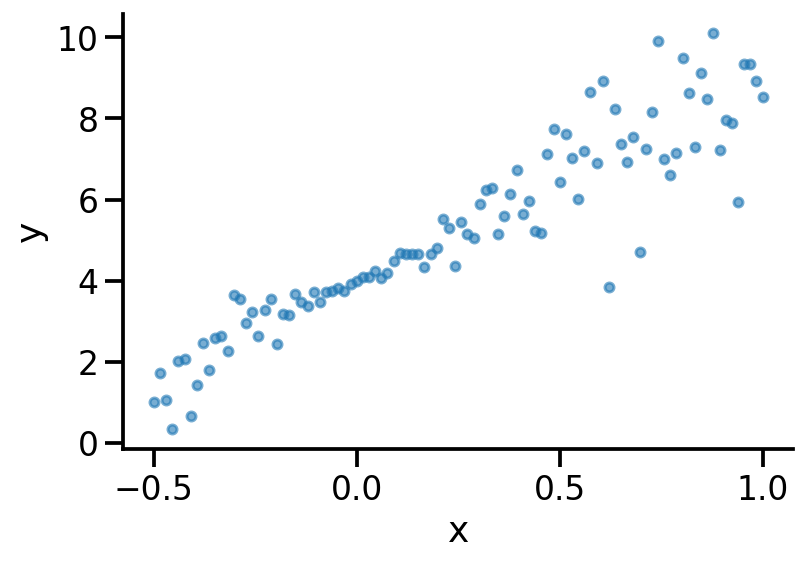

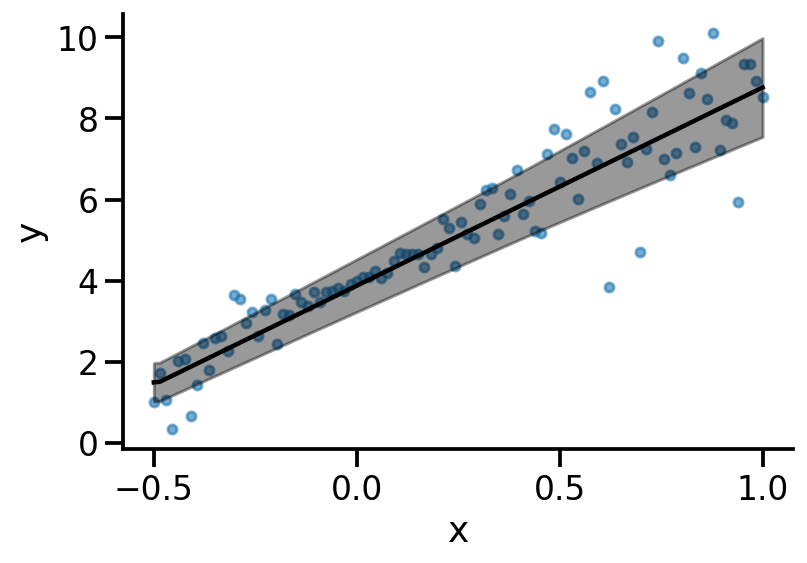

    np.random.seed(42)
    x = np.linspace(-0.5, 1, 100)
    # Linear trend plus noise whose magnitude grows with |x|
    y = 5 * x + 4 + 2 * np.multiply(x, np.random.randn(100))
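Written out, the data-generating line above corresponds to the following model (a restatement of the code, assuming `np.random.randn` draws standard normal noise):

```latex
y_i = 5x_i + 4 + 2x_i\,\varepsilon_i, \qquad \varepsilon_i \sim \mathcal{N}(0, 1)
\quad\Longrightarrow\quad
y_i \mid x_i \sim \mathcal{N}\!\left(5x_i + 4,\ (2x_i)^2\right)
```

The mean is linear in x, while the standard deviation is 2|x|, so the noise is heteroscedastic: it vanishes at x = 0 and grows towards both ends of the range.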

Code

    plt.scatter(x, y, s=20, alpha=0.6)
    plt.xlabel("x")
    plt.ylabel("y")

Model 1: Vanilla Linear Regression

Code

    model = Sequential([Dense(input_shape=(1,), units=1, name='D1')])


Code

    model.summary()

    Model: "sequential"
    _________________________________________________________________
     Layer (type)                Output Shape              Param #
    =================================================================
     D1 (Dense)                  (None, 1)                 2
    =================================================================
    Total params: 2
    Trainable params: 2
    Non-trainable params: 0
    _________________________________________________________________

Code

    model.compile(loss='mse', optimizer='adam')
    model.fit(x, y, epochs=4000, verbose=0)

<keras.callbacks.History at 0x1a36573a0>

Code

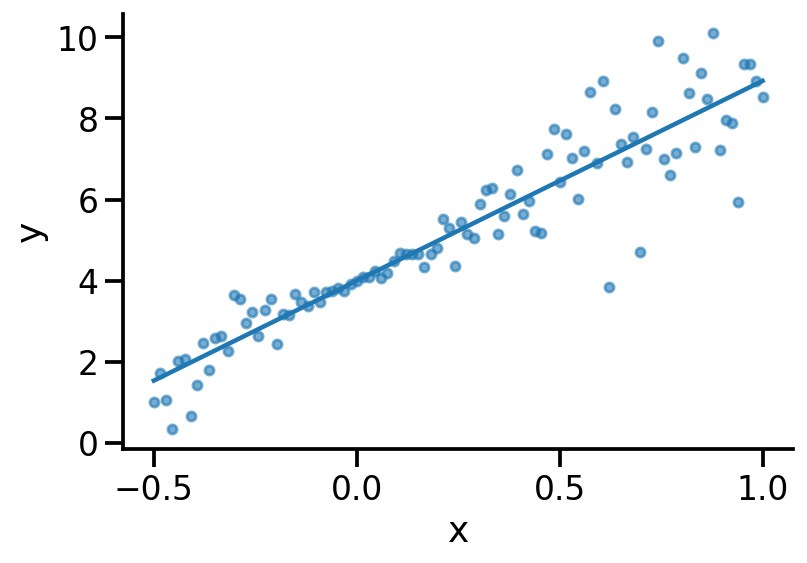

    model.get_layer('D1').weights

[<tf.Variable 'D1/kernel:0' shape=(1, 1) dtype=float32, numpy=array([[4.929521]], dtype=float32)>,

<tf.Variable 'D1/bias:0' shape=(1,) dtype=float32, numpy=array([3.997371], dtype=float32)>]

Code

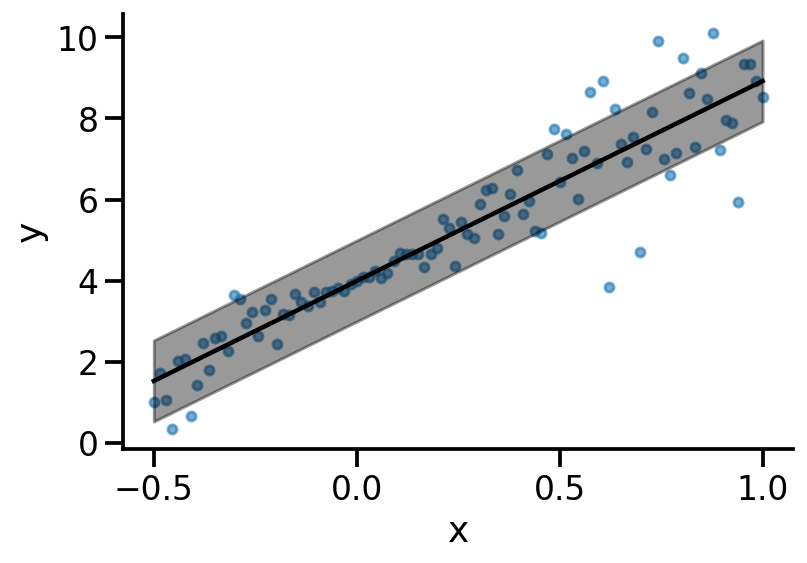

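    plt.scatter(x, y, s=20, alpha=0.6)
    plt.xlabel("x")
    plt.ylabel("y")
    pred_m1 = model.predict(x)  # variable name assumed; the original was lost in extraction
    plt.plot(x, pred_m1)        # assumed: draws the fitted line shown in the figure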
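The kernel and bias learned by Model 1 (about 4.93 and 4.00) can be sanity-checked against closed-form ordinary least squares, since minimising MSE with a single `Dense` unit is a linear regression. The sketch below is not part of the original notebook: `ols_fit` is a hypothetical helper, and the data are generated the same way as above but with the standard-library RNG, so the individual draws differ from the notebook's.

```python
import random


def ols_fit(xs, ys):
    """Closed-form least-squares slope and intercept for y = a*x + b."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(xs, ys))
    sxx = sum((xi - mean_x) ** 2 for xi in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Same recipe as the notebook (y = 5x + 4 + 2x * eps), different random draws.
rng = random.Random(0)
xs = [-0.5 + 1.5 * i / 99 for i in range(100)]  # same grid as np.linspace(-0.5, 1, 100)
ys = [5 * xi + 4 + 2 * xi * rng.gauss(0, 1) for xi in xs]

slope, intercept = ols_fit(xs, ys)
print(f"slope={slope:.3f}, intercept={intercept:.3f}")
```

If the trained network's weights are far from the least-squares solution on the same data, that usually points to too few epochs or an unsuitable learning rate rather than a modelling problem.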

Model 2

Code

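    model_2 = Sequential([
        Dense(input_shape=(1,), units=1, name='M2_D1'),
        # Wrap the Dense output as the mean of a Normal with fixed unit scale
        tfl.DistributionLambda(lambda loc: tfd.Normal(loc=loc, scale=1.), name='M2_Likelihood'),
    ])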


Code

    model_2.summary()

    Model: "sequential_1"
    _________________________________________________________________
     Layer (type)                Output Shape              Param #
    =================================================================
     M2_D1 (Dense)               (None, 1)                 2
     M2_Likelihood (Distribution  ((None, 1),              0
     Lambda)                      (None, 1))
    =================================================================
    Total params: 2
    Trainable params: 2
    Non-trainable params: 0
    _________________________________________________________________

Code

    m2_weights = model_2.get_layer('M2_D1').weights  # variable name assumed; the original was lost in extraction

Code

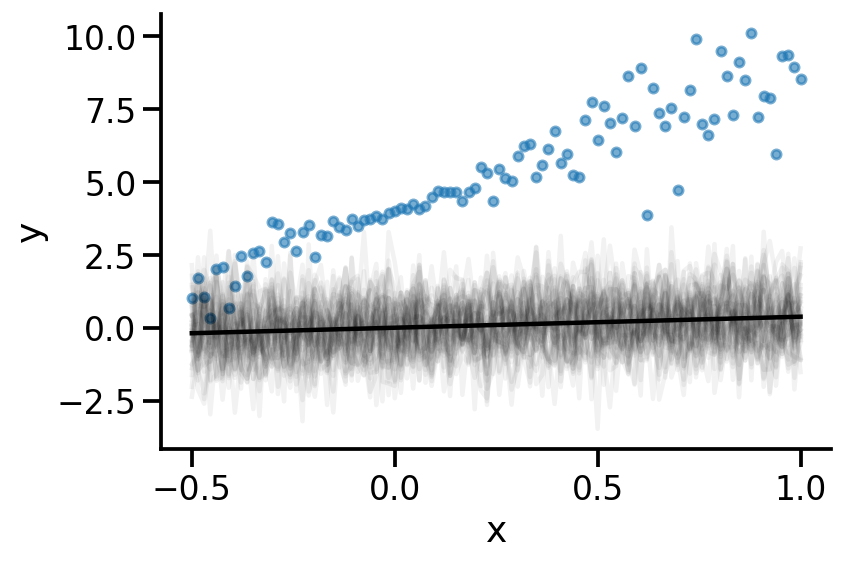

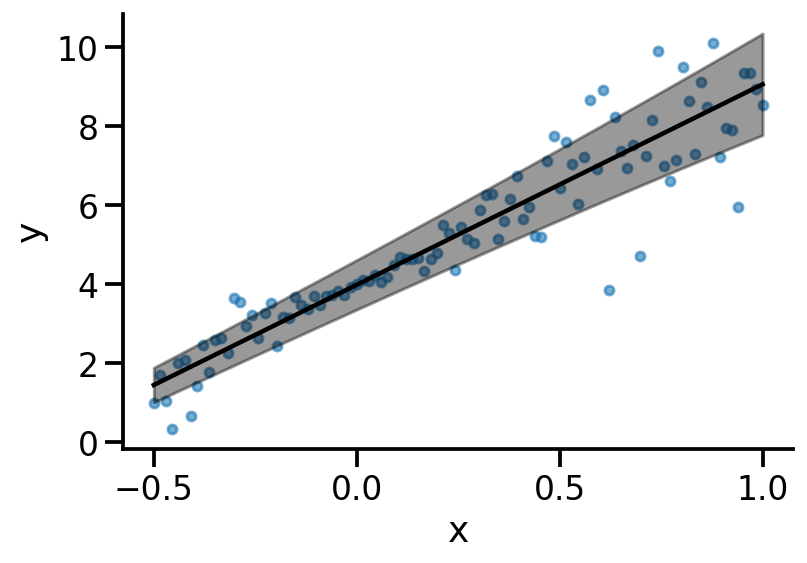

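    plt.scatter(x, y, s=20, alpha=0.6)
    plt.xlabel("x")
    plt.ylabel("y")
    m = model_2(x)  # the model output is a distribution, not a tensor
    # 40 draws from the predictive distribution, one faint line per draw
    plt.plot(x, m.sample(40).numpy()[:, :, 0].T, color='k', alpha=0.05);
    # Assumed from the surviving `color='k'` fragment: the predictive mean
    plt.plot(x, m.mean().numpy().flatten(), color='k')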

Code

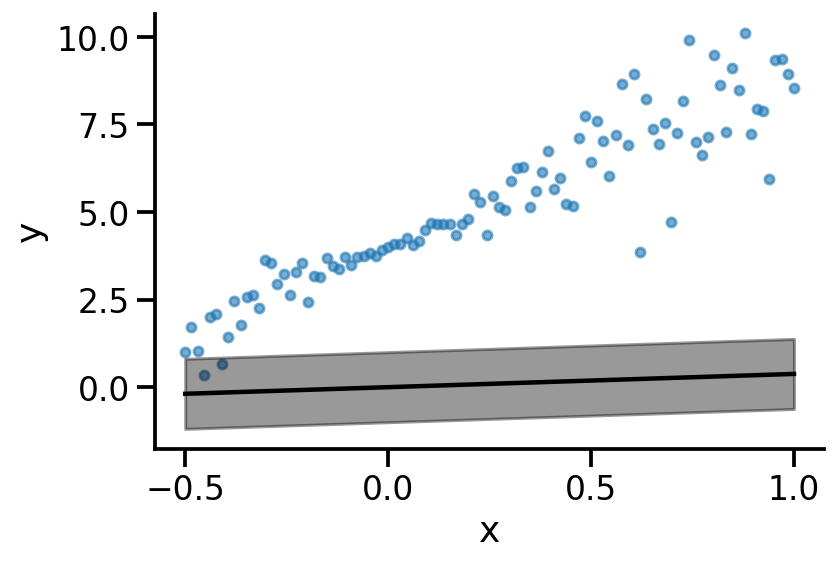

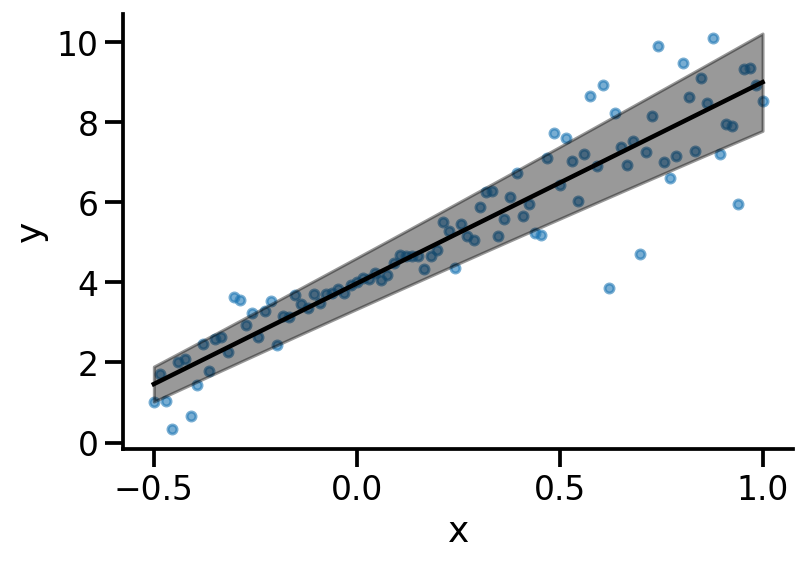

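    def plot(model):
        plt.scatter(x, y, s=20, alpha=0.6)
        plt.xlabel("x")
        plt.ylabel("y")
        m = model(x)
        m_s = m.stddev().numpy().flatten()
        m_m = m.mean().numpy().flatten()
        # Predictive mean with a band of one standard deviation on each side
        plt.plot(x, m_m, color='k')
        plt.fill_between(x, m_m - m_s, m_m + m_s, color='k', alpha=0.4)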

Code

    def nll(y_true, y_pred):
        # y_pred is a distribution
        return -y_pred.log_prob(y_true)
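For a Normal likelihood, the negative log-likelihood has the closed form 0.5·log(2πσ²) + (y − μ)²/(2σ²). With the scale fixed at 1, as in Model 2, it differs from half the squared error only by a constant, which is why Model 2 should recover roughly the same line as the MSE-trained Model 1. The sketch below checks this numerically; `normal_nll` is a hypothetical pure-Python helper, not the TFP `log_prob` call.

```python
import math


def normal_nll(y, mu, sigma):
    """Negative log-density of y under Normal(mu, sigma)."""
    return 0.5 * math.log(2 * math.pi * sigma ** 2) + (y - mu) ** 2 / (2 * sigma ** 2)


y_obs = 4.2
for mu in (3.0, 4.0, 5.0):
    nll_val = normal_nll(y_obs, mu, sigma=1.0)
    half_sq_err = 0.5 * (y_obs - mu) ** 2
    # With sigma fixed at 1, the gap is the same constant for every mu.
    print(mu, round(nll_val - half_sq_err, 6))
```

Once the scale is learned instead of fixed, the log(σ) term no longer drops out, and the loss trades a wider predictive band against a smaller penalty on large residuals.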

Code

    model_2.compile(loss=nll, optimizer='adam')
    model_2.fit(x, y, epochs=4000, verbose=0)

<keras.callbacks.History at 0x1a3b96880>

Model 3

Code

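    # Model name assumed by analogy with model_2
    model_3 = Sequential([
        Dense(input_shape=(1,), units=2, name='M3_D1'),
        # First output is the mean; exp of the second output is the (positive) scale
        tfl.DistributionLambda(lambda t: tfd.Normal(loc=t[..., 0], scale=tf.exp(t[..., 1])), name='M3_Likelihood'),
    ])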

Code

    model_3.get_layer('M3_D1').weights

[<tf.Variable 'M3_D1/kernel:0' shape=(1, 2) dtype=float32, numpy=array([[0.04476571, 0.55212975]], dtype=float32)>,

<tf.Variable 'M3_D1/bias:0' shape=(2,) dtype=float32, numpy=array([0., 0.], dtype=float32)>]

Code

    model_3.compile(loss=nll, optimizer='adam')
    model_3.fit(x, y, epochs=4000, verbose=0)

<keras.callbacks.History at 0x1a3d20190>

A good reference: https://tensorchiefs.github.io/bbs/files/21052019-bbs-Beate-uncertainty.pdf

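At this point, the scale (sigma) of the predicted Normal depends on the input x: the Dense layer outputs a linear function of x, and the exponential maps it to a positive scale, so log(sigma) is linear in x.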
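The raw network output is unconstrained, while a Normal scale must be positive. The notebook uses two mappings for this: `tf.exp` in the model above and `tf.math.softplus` in the one that follows. The sketch below compares the two functions in plain Python; `softplus` here is a hypothetical helper that mirrors the mathematical definition log(1 + exp(t)), not the TensorFlow op itself.

```python
import math


def softplus(t):
    """Numerically stable log(1 + exp(t))."""
    return max(t, 0.0) + math.log1p(math.exp(-abs(t)))


for t in (-5.0, 0.0, 5.0, 20.0):
    print(f"t={t:6.1f}  exp={math.exp(t):16.4f}  softplus={softplus(t):8.4f}")
```

Both are positive everywhere, but softplus grows only linearly for large inputs, whereas exp grows exponentially. That can make softplus the gentler choice when the pre-activation occasionally becomes large during training.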

Model 4

Code

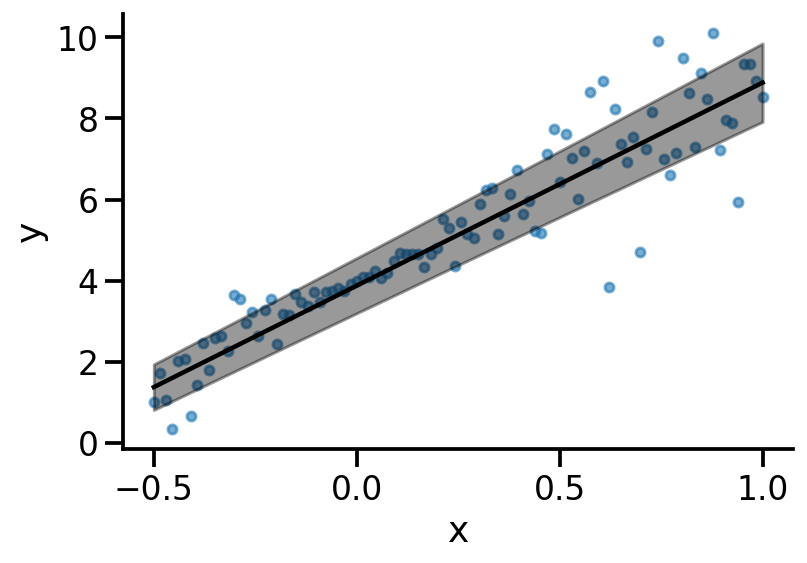

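    # Model name assumed from the M4_ layer names
    model_4 = Sequential([
        Dense(input_shape=(1,), units=2, name='M4_D1', activation='relu'),
        Dense(units=2, name='M4_D2', activation='relu'),
        Dense(units=2, name='M4_D3'),
        # First output is the mean; softplus of the second output is the (positive) scale
        tfl.DistributionLambda(lambda t: tfd.Normal(loc=t[..., 0], scale=tf.math.softplus(t[..., 1])), name='M4_Likelihood'),
    ])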

Code

    model_4.compile(loss=nll, optimizer='adam')
    model_4.fit(x, y, epochs=4000, verbose=0)

<keras.callbacks.History at 0x1a3cd0a60>

Model 5

Follow: https://juanitorduz.github.io/tfp_lm/

Model 6

Dense Variational

Code

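    def prior(kernel_size, bias_size, dtype=None):
        n = kernel_size + bias_size
        # Independent Normal Distribution
        return lambda t: tfd.Independent(
            tfd.Normal(loc=tf.zeros(n, dtype=dtype), scale=1),
            reinterpreted_batch_ndims=1)

    def posterior(kernel_size, bias_size, dtype=None):
        n = kernel_size + bias_size
        # The lines after `dtype=dtype),` were lost in extraction; the closing
        # IndependentNormal layer is the usual TFP pattern and is assumed here.
        return Sequential([
            tfl.VariableLayer(tfl.IndependentNormal.params_size(n), dtype=dtype),
            tfl.IndependentNormal(n),
        ])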

Code

    N = len(x)
    # Model name assumed; the original was lost in extraction
    model_6 = Sequential([
        # Requires posterior and prior distribution
        # Add kl_weight for weight regularization
        #tfl.DenseVariational(16, posterior, prior, kl_weight=1/N, activation='relu', input_shape=(1, )),
        tfl.DenseVariational(2, posterior, prior, kl_weight=1/N, input_shape=(1,)),
        tfl.IndependentNormal(1)
    ])
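The `kl_weight=1/N` argument scales the KL divergence between the weight posterior and the prior before it is added to the loss. Because the `nll` loss is averaged per example, dividing the KL term by the number of training points keeps the two on a comparable scale. The sketch below shows the size of that penalty for univariate Gaussians against a standard normal prior; `kl_normal` and the posterior values are hypothetical and chosen only for illustration, not taken from a trained model.

```python
import math


def kl_normal(mu_q, sigma_q, mu_p=0.0, sigma_p=1.0):
    """KL( N(mu_q, sigma_q) || N(mu_p, sigma_p) ) for univariate Gaussians."""
    return (math.log(sigma_p / sigma_q)
            + (sigma_q ** 2 + (mu_q - mu_p) ** 2) / (2 * sigma_p ** 2)
            - 0.5)


N = 100  # number of training points, matching len(x) in the notebook

# Illustrative (mean, stddev) pairs for two weights; not fitted values.
posterior_params = [(1.0, 1.0), (0.0, 0.5)]

kl_total = sum(kl_normal(mu, sigma) for mu, sigma in posterior_params)
kl_penalty = kl_total / N  # what kl_weight = 1 / N contributes to the loss
print(kl_total, kl_penalty)
```

A posterior identical to the prior contributes zero penalty. The further the posterior mean moves from zero, or the narrower it becomes, the larger the KL term, which is the regularising effect the comment in the model definition refers to.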

Code

    model_6.compile(loss=nll, optimizer='adam')

Code

    model_6.fit(x, y, epochs=5000, verbose=0)

<keras.callbacks.History at 0x1a61a9ee0>

Code

    N = len(x)
    # Model name assumed; the original was lost in extraction
    model_7 = Sequential([
        # Requires posterior and prior distribution
        # Add kl_weight for weight regularization
        tfl.DenseVariational(16, posterior, prior, kl_weight=1/N, activation='relu', input_shape=(1,)),
        tfl.DenseVariational(2, posterior, prior, kl_weight=1/N),
        tfl.IndependentNormal(1)
    ])
    model_7.compile(loss=nll, optimizer='adam')

Code

    model_7.fit(x, y, epochs=5000, verbose=0)

<keras.callbacks.History at 0x1a669da90>

https://livebook.manning.com/book/probabilistic-deep-learning-with-python/chapter-8/123