def resolve_image_path(name):

candidates = [Path(name), Path("posts") / name]

for path in candidates:

if path.exists():

return path

raise FileNotFoundError(name)

def load_image_local(name):

return Image.open(resolve_image_path(name)).convert("RGB")

def xyxy_from_center_size(x, y, w, h, image_w, image_h):

cx, cy = x * image_w, y * image_h

bw, bh = w * image_w, h * image_h

return [cx - bw / 2, cy - bh / 2, cx + bw / 2, cy + bh / 2]

def mask_to_measurements(mask):

mask = np.asarray(mask).astype(bool)

ys, xs = np.nonzero(mask)

if len(xs) == 0:

raise ValueError("Empty mask")

return {

"xyxy": [float(xs.min()), float(ys.min()), float(xs.max()), float(ys.max())],

"centroid": [float(xs.mean()), float(ys.mean())],

"area_px": int(mask.sum()),

}

def falcon_detection_to_object(det, label, image_size):

width, height = image_size

if "mask" in det and det["mask"] is not None:

base = mask_to_measurements(det["mask"])

else:

xyxy = xyxy_from_center_size(

det["xy"]["x"], det["xy"]["y"], det["hw"]["w"], det["hw"]["h"], width, height

)

base = {

"xyxy": xyxy,

"centroid": [float((xyxy[0] + xyxy[2]) / 2), float((xyxy[1] + xyxy[3]) / 2)],

"area_px": int(max(0.0, xyxy[2] - xyxy[0]) * max(0.0, xyxy[3] - xyxy[1])),

}

return {**base, "label": label}

def choose_primary(objects, label):

matches = [obj for obj in objects if obj["label"] == label]

if not matches:

raise ValueError(f"No objects found for {label!r}")

return max(matches, key=lambda obj: obj["area_px"])

def objects_to_detections(objects):

if not objects:

return sv.Detections(

xyxy=np.zeros((0, 4), dtype=np.float32),

class_id=np.array([], dtype=int),

data={"class_name": []},

)

label_to_id = {label: idx for idx, label in enumerate(sorted({obj["label"] for obj in objects}))}

xyxy = np.array([obj["xyxy"] for obj in objects], dtype=np.float32)

class_id = np.array([label_to_id[obj["label"]] for obj in objects], dtype=int)

class_name = [obj["label"] for obj in objects]

return sv.Detections(xyxy=xyxy, class_id=class_id, data={"class_name": class_name})

BOX_ANNOTATOR = sv.BoxAnnotator(thickness=2, color_lookup=sv.ColorLookup.CLASS)

LABEL_ANNOTATOR = sv.LabelAnnotator(

text_scale=0.45,

text_padding=4,

color_lookup=sv.ColorLookup.CLASS,

)

def render_annotated_scene(image, objects, show_labels=True):

scene = np.array(image.convert("RGB")).copy()

if not objects:

return scene

detections = objects_to_detections(objects)

scene = BOX_ANNOTATOR.annotate(scene=scene, detections=detections)

if show_labels:

labels = [obj["label"] for obj in objects]

scene = LABEL_ANNOTATOR.annotate(scene=scene, detections=detections, labels=labels)

return scene

def _overlay_text(ax, x, y, text, ha="left"):

ax.text(

x,

y,

text,

ha=ha,

va="top",

color="white",

fontsize=11,

bbox={"facecolor": "black", "alpha": 0.65, "pad": 4},

)

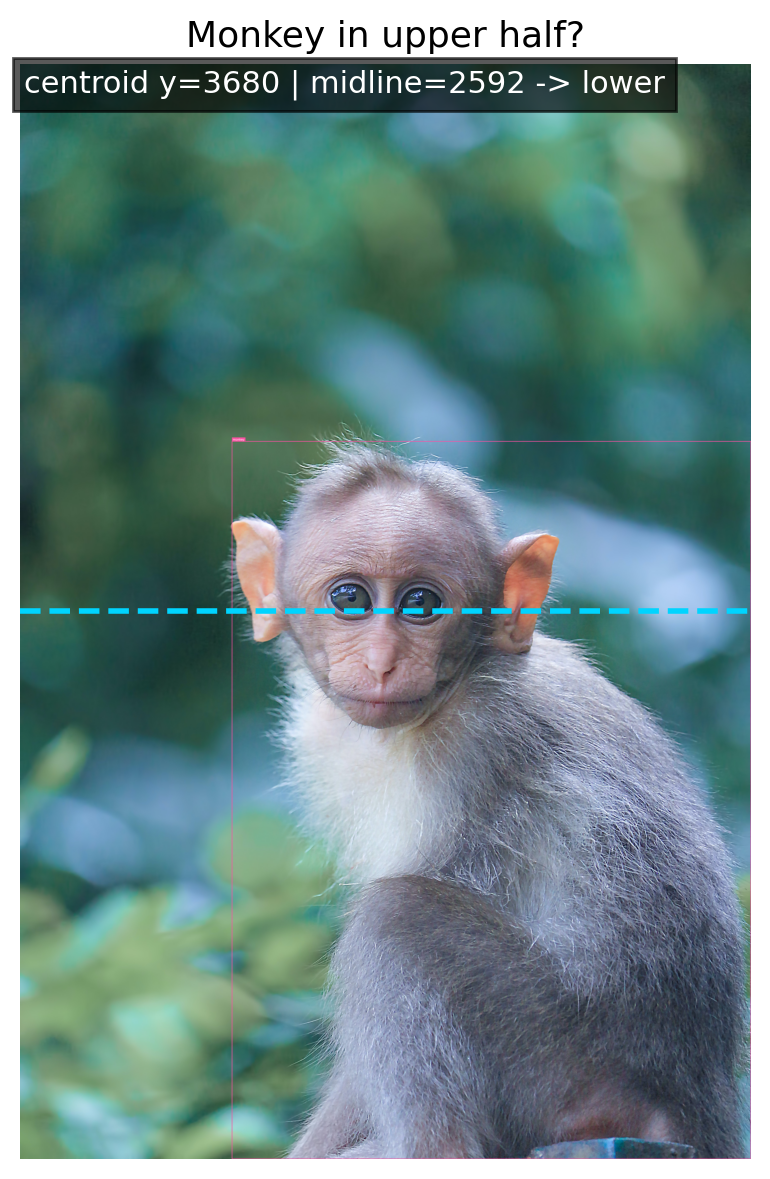

def plot_result_scene(result):

measurement = result["measurement"]

analysis = measurement["analysis"]

focus_objects = result["focus_objects"]

show_labels = len(focus_objects) <= 8

scene = render_annotated_scene(result["image"], focus_objects, show_labels=show_labels)

fig, ax = plt.subplots(figsize=(10, 6))

ax.imshow(scene)

if analysis == "count_by_half":

split_x = measurement["split_x"]

ax.axvline(split_x, color="#00d4ff", linestyle="--", linewidth=2)

_overlay_text(ax, split_x - 14, 26, f"left: {measurement['left_count']}", ha="right")

_overlay_text(ax, split_x + 14, 26, f"right: {measurement['right_count']}")

elif analysis == "width_threshold":

_overlay_text(

ax,

18,

26,

f"width fraction = {measurement['width_fraction']:.4f} vs threshold {measurement['threshold']:.4f}",

)

elif analysis == "edge_margin_threshold":

obj = focus_objects[0]

x1, y1, x2, y2 = obj["xyxy"]

edge = measurement["edge"]

y_mid = (y1 + y2) / 2

if edge == "right":

ax.hlines(y_mid, x2, result["image"].size[0], colors="#ff4d6d", linewidth=3)

_overlay_text(

ax,

result["image"].size[0] - 18,

y_mid - 24,

f"right margin {measurement['margin_px']} px vs threshold {measurement['threshold_px']} px",

ha="right",

)

elif edge == "left":

ax.hlines(y_mid, 0, x1, colors="#00d4ff", linewidth=3)

_overlay_text(ax, 18, y_mid - 24, f"left margin {measurement['margin_px']} px vs threshold {measurement['threshold_px']} px")

elif analysis == "centered_square_crop":

crop_x1, crop_y1, crop_x2, crop_y2 = measurement["square_crop_xyxy"]

rect = patches.Rectangle(

(crop_x1, crop_y1),

crop_x2 - crop_x1,

crop_y2 - crop_y1,

linewidth=3,

edgecolor="#00d4ff",

facecolor="none",

linestyle="--",

)

ax.add_patch(rect)

_overlay_text(ax, crop_x1 + 18, crop_y1 + 34, "centered square crop")

ax.set_title(result["task"]["name"], fontsize=13)

ax.axis("off")

plt.tight_layout()

plt.show()

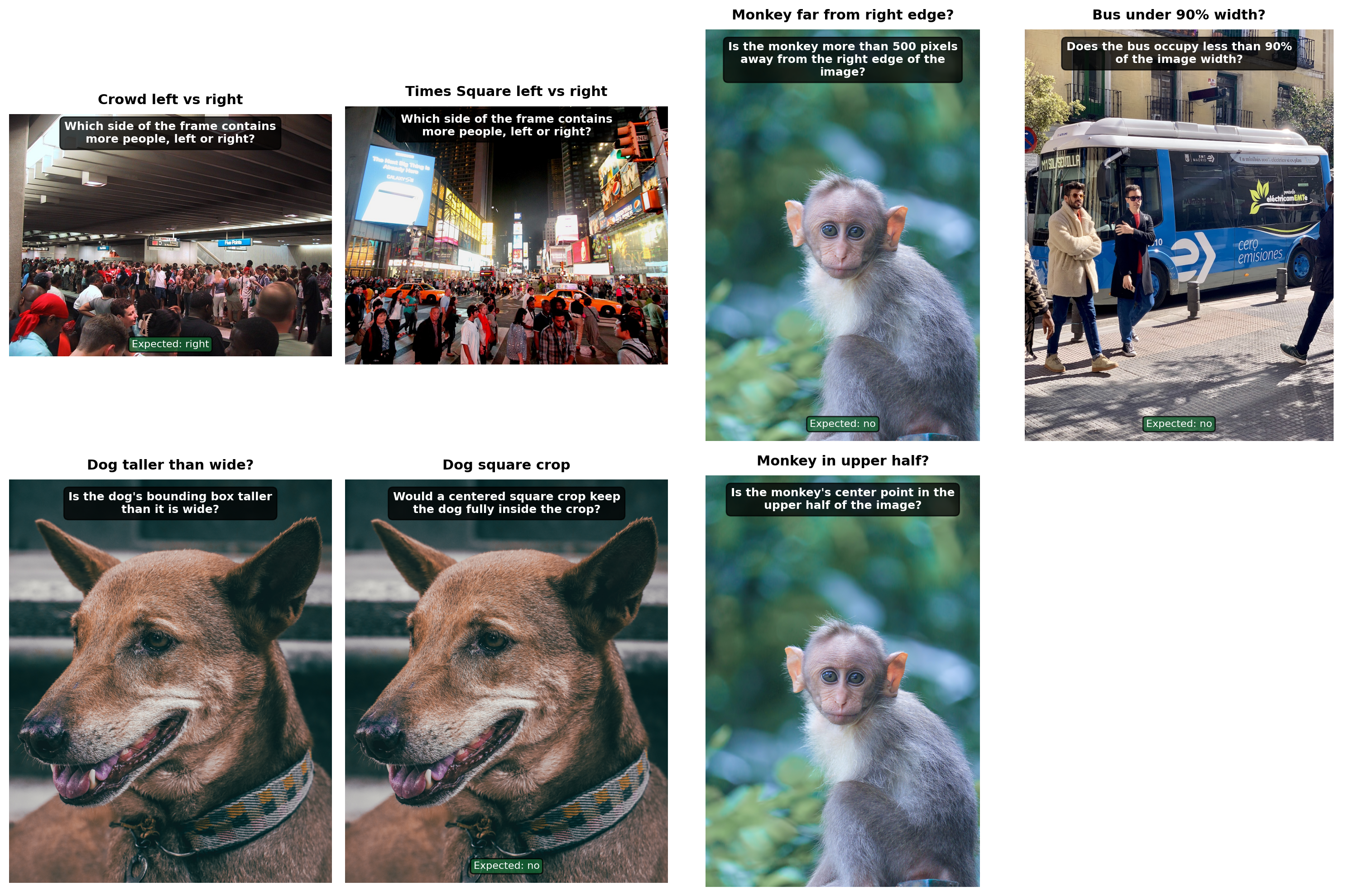

def show_task_gallery(tasks):

fig, axes = plt.subplots(2, 2, figsize=(12, 8))

for ax, task in zip(axes.flat, tasks):

ax.imshow(load_image_local(task["image"]))

ax.set_title(task["name"], fontsize=11)

ax.set_xlabel(task["question"], fontsize=9)

ax.axis("off")

plt.tight_layout()

plt.show()

def show_task_gallery_extended(tasks):

"""Show task gallery with question overlaid on each image."""

import textwrap

n = len(tasks)

cols = min(n, 4)

rows = (n + cols - 1) // cols

fig, axes = plt.subplots(rows, cols, figsize=(15, 5 * rows))

if rows == 1 and cols == 1:

axes = [axes]

elif rows == 1:

axes = list(axes)

else:

axes = list(axes.flat)

for i, task in enumerate(tasks):

img = load_image_local(task['image'])

axes[i].imshow(img)

# Overlay question at the top of the image

q = textwrap.fill(task['question'], width=35)

axes[i].text(

0.5, 0.97, q,

transform=axes[i].transAxes, fontsize=9, fontweight='bold',

color='white', ha='center', va='top',

bbox=dict(boxstyle='round,pad=0.4', facecolor='black', alpha=0.75),

)

# Overlay expected answer at bottom

exp = task.get('expected')

if exp is not None:

exp_str = 'yes' if exp is True else 'no' if exp is False else str(exp)

axes[i].text(

0.5, 0.03, f'Expected: {exp_str}',

transform=axes[i].transAxes, fontsize=8,

color='white', ha='center', va='bottom',

bbox=dict(boxstyle='round,pad=0.3', facecolor='#166534', alpha=0.8),

)

axes[i].set_title(task['name'], fontsize=11, fontweight='bold', pad=8)

axes[i].axis('off')

for j in range(n, len(axes)):

axes[j].axis('off')

plt.tight_layout()

plt.show()